When you add a new data source to Metron, the first step is to decide how to push events from the new telemetry source into Metron. You can use any of a number of data collection tools; that decision is decoupled from Metron. Apache NiFi is an excellent tool for this purpose, and this section describes how to use it. The second step is to configure Metron to parse the telemetry so that downstream processing can be done on it. This article walks you through both steps.

In the previous section, Setting up the Use Case, we described the following set of requirements for Customer Foo who wanted to add the Squid telemetry data source into Metron.

In this article, we will walk you through how to perform steps 1, 2, and 6.

You will need to install Metron first. Today, there are three options to install Metron: Metron Installation Options. The following instructions should be applicable to all three install options given the following variables that you will need to plug in with your own values:

The following steps guide you through how to add this new telemetry.

sudo yum install squid
sudo service squid start

sudo su -
cd /var/log/squid
ls

You see that there are three types of logs available: access.log, cache.log, and squid.out. We are interested in access.log because that is the log that records the proxy usage.

squidclient -h 127.0.0.1 "http://www.aliexpress.com/af/shoes.html?ltype=wholesale&d=y&origin=n&isViewCP=y&catId=0&initiative_id=SB_20160622082445&SearchText=shoes"

squidclient -h 127.0.0.1 "http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878"

squidclient -h 127.0.0.1 "http://www.pravda.ru/science/"

squidclient -h 127.0.0.1 "http://www.brightsideofthesun.com/2016/6/25/12027078/anatomy-of-a-deal-phoenix-suns-pick-bender-chriss"

squidclient -h 127.0.0.1 "https://www.microsoftstore.com/store/msusa/en_US/pdp/Microsoft-Band-2-Charging-Stand/productID.329506400"

squidclient -h 127.0.0.1 "https://tfl.gov.uk/plan-a-journey/"

squidclient -h 127.0.0.1 "https://www.facebook.com/Africa-Bike-Week-1550200608567001/"

squidclient -h 127.0.0.1 "http://www.ebay.com/itm/02-Infiniti-QX4-Rear-spoiler-Air-deflector-Nissan-Pathfinder-/172240020293?fits=Make%3AInfiniti%7CModel%3AQX4&hash=item281a4e2345:g:iMkAAOSwoBtW4Iwx&vxp=mtr"

squidclient -h 127.0.0.1 "http://www.recruit.jp/corporate/english/company/index.html"

squidclient -h 127.0.0.1 "http://www.lada.ru/en/cars/4x4/3dv/about.html"

squidclient -h 127.0.0.1 "http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878"

squidclient -h 127.0.0.1 "http://www.aliexpress.com/af/shoes.html?ltype=wholesale&d=y&origin=n&isViewCP=y&catId=0&initiative_id=SB_20160622082445&SearchText=shoes"

In production environments, you would configure your users' web browsers to point to the proxy server. For simplicity, this tutorial uses the client that is packaged with the Squid installation. After we use the client to simulate proxy requests, the Squid log entries should look as follows:

1467011157.401 415 127.0.0.1 TCP_MISS/200 337891 GET http://www.aliexpress.com/af/shoes.html? - DIRECT/207.109.73.154 text/html

1467011158.083 671 127.0.0.1 TCP_MISS/200 41846 GET http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878 - DIRECT/212.227.34.3 text/html

1467011159.978 1893 127.0.0.1 TCP_MISS/200 153925 GET http://www.pravda.ru/science/ - DIRECT/185.103.135.90 text/html

The format of each log entry is: timestamp | time elapsed | remotehost | code/status | bytes | method | URL | rfc931 | peerstatus/peerhost | type
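To make the field layout concrete, here is a minimal Python sketch that splits one log entry into the columns listed above. It is only an illustration and not part of Metron. The function name `parse_squid_line` and the dictionary keys are our own labels, and the sketch assumes the native Squid format with whitespace between every field, including between the method and the URL.

```python
from datetime import datetime, timezone

# Illustrative labels for the ten whitespace-separated columns.
FIELDS = ["timestamp", "elapsed", "remotehost", "code_status", "bytes",
          "method", "url", "rfc931", "peer", "type"]

def parse_squid_line(line):
    """Split one native-format access.log line into a dict of named fields."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(parts)}")
    record = dict(zip(FIELDS, parts))
    # The timestamp is UNIX epoch seconds with a millisecond fraction.
    record["time"] = datetime.fromtimestamp(float(record["timestamp"]), tz=timezone.utc)
    record["elapsed"] = int(record["elapsed"])
    record["bytes"] = int(record["bytes"])
    return record

sample = ("1467011159.978 1893 127.0.0.1 TCP_MISS/200 153925 "
          "GET http://www.pravda.ru/science/ - DIRECT/185.103.135.90 text/html")
record = parse_squid_line(sample)
print(record["method"], record["url"], record["time"].isoformat())
```

Splitting on whitespace is enough here because none of the fields in this format contain spaces. The Grok pattern used later in this article extracts only a subset of these columns.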

Create a Kafka topic called squid.

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --zookeeper $ZOOKEEPER_HOST:2181 --create --topic squid --partitions 1 --replication-factor 1

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --zookeeper $ZOOKEEPER_HOST:2181 --list

You should see the following list of Kafka topics:

bro

enrichment

pcap

snort

squid

yaf

Now we are ready to tackle the Metron parsing topology setup.

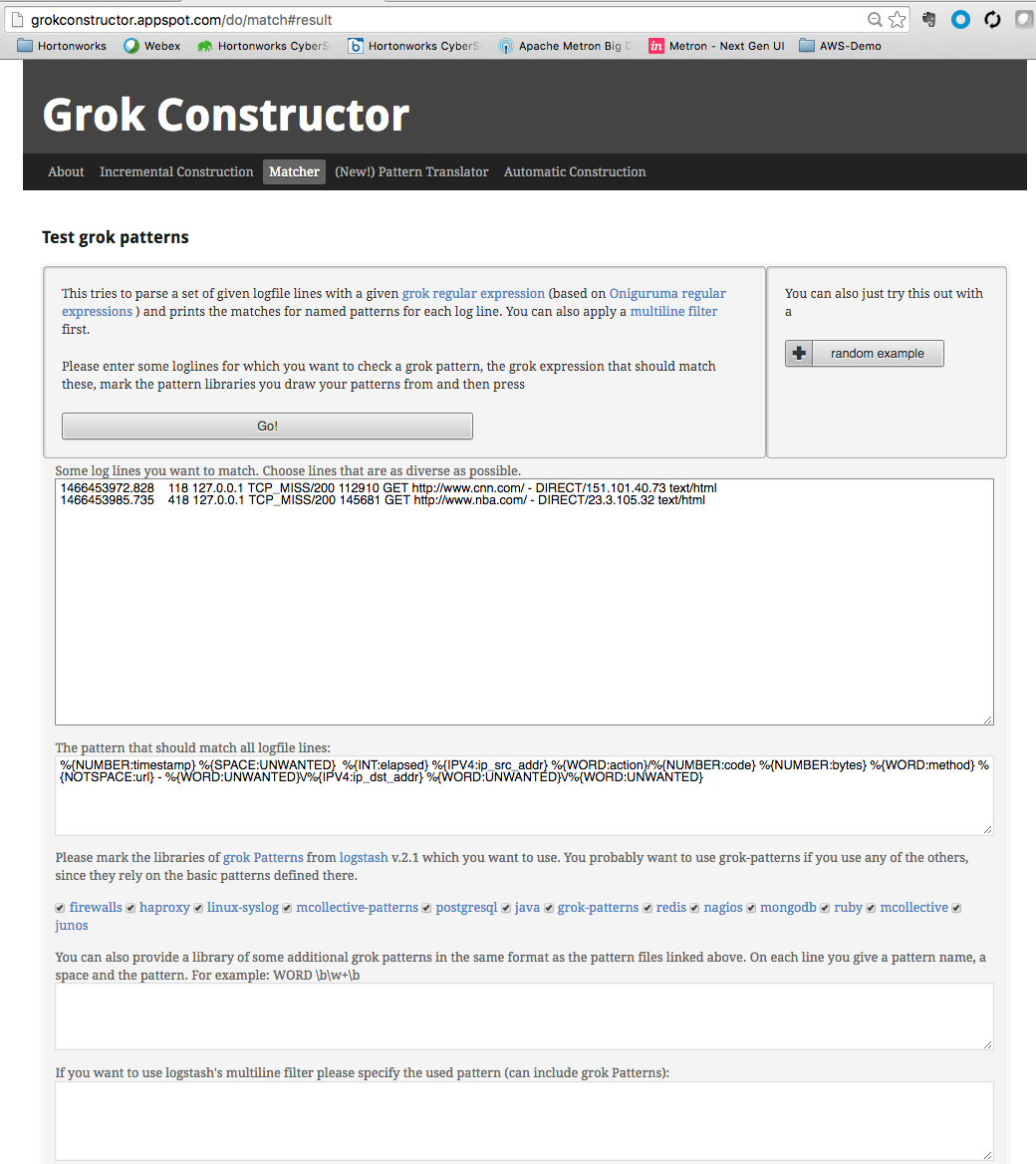

SQUID_DELIMITED %{NUMBER:timestamp}[^0-9]*%{INT:elapsed} %{IP:ip_src_addr} %{WORD:action}/%{NUMBER:code} %{NUMBER:bytes} %{WORD:method} %{NOTSPACE:url}.*%{IP:ip_dst_addr}

If you do not want to include any part of the message in the resulting JSON structure, you can apply the UNWANTED tag to that section.

Finally, notice that we applied the naming convention to the IPV4 field by referencing the following list of field conventions.
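To see which fields a pattern like SQUID_DELIMITED pulls out of a log entry, it can help to try a rough equivalent outside Metron. The following Python sketch is an approximation under stated assumptions. The primitive definitions (`NUMBER`, `INT`, `IPV4`, `WORD`, `NOTSPACE`) are simplified stand-ins for the real Grok patterns, and the capture names follow the Metron naming convention (`ip_src_addr`, `ip_dst_addr`). Note the `[^0-9]*` between the timestamp and elapsed groups: a greedy `.*` in that position would backtrack only far enough to leave the last digit of the elapsed time for the capture.

```python
import re

# Simplified stand-ins for Grok primitives; the real definitions are broader
# (for example, Grok's IP also accepts IPv6 addresses).
NUMBER = r"[+-]?\d+(?:\.\d+)?"
INT = r"[+-]?\d+"
IPV4 = r"(?<![0-9])\d{1,3}(?:\.\d{1,3}){3}(?![0-9])"
WORD = r"\w+"
NOTSPACE = r"\S+"

SQUID_DELIMITED = re.compile(
    rf"(?P<timestamp>{NUMBER})[^0-9]*(?P<elapsed>{INT}) "
    rf"(?P<ip_src_addr>{IPV4}) "
    rf"(?P<action>{WORD})/(?P<code>{NUMBER}) "
    rf"(?P<bytes>{NUMBER}) "
    rf"(?P<method>{WORD}) "
    rf"(?P<url>{NOTSPACE}).*(?P<ip_dst_addr>{IPV4})"
)

sample = ("1467011157.401 415 127.0.0.1 TCP_MISS/200 337891 "
          "GET http://www.aliexpress.com/af/shoes.html? - DIRECT/207.109.73.154 text/html")
fields = SQUID_DELIMITED.search(sample).groupdict()
for name, value in fields.items():
    print(f"{name}: {value}")
```

The digit lookarounds around `IPV4` keep the greedy `.*` before the destination address from swallowing its leading digits.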

Create a file called "squid" in the /tmp directory and copy the Grok pattern into the file.

touch /tmp/squid

Put the squid file into the HDFS directory where Metron stores its Grok parsers. Existing Grok parsers that ship with Metron are staged under /apps/metron/patterns.

su - hdfs

hadoop fs -rm -r /apps/metron/patterns/squid

hdfs dfs -put /tmp/squid /apps/metron/patterns/

Now that the Grok pattern is staged in HDFS, we need to define a parser configuration for the Metron Parsing Topology. The configurations are kept in ZooKeeper, so the sensor configuration must be uploaded there after it has been created.

touch /usr/metron/$METRON_VERSION/config/zookeeper/parsers/squid.json

{
  "parserClassName": "org.apache.metron.parsers.GrokParser",
  "sensorTopic": "squid",
  "parserConfig": {
    "grokPath": "/apps/metron/patterns/squid",
    "patternLabel": "SQUID_DELIMITED",
    "timestampField": "timestamp"
  },
  "fieldTransformations": [
    {
      "transformation": "STELLAR",
      "output": [ "full_hostname", "domain_without_subdomains" ],
      "config": {
        "full_hostname": "URL_TO_HOST(url)",
        "domain_without_subdomains": "DOMAIN_REMOVE_SUBDOMAINS(full_hostname)"
      }
    }
  ]
}
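The fieldTransformations block derives two new fields from the parsed url field. The following Python sketch imitates the effect of the two Stellar expressions so you can see what values to expect. It is a rough stand-in, not Metron's implementation. The function names are ours, and `MULTI_LABEL_SUFFIXES` is a hypothetical, deliberately tiny list; we assume the real DOMAIN_REMOVE_SUBDOMAINS handles public suffixes such as co.uk in general.

```python
from urllib.parse import urlsplit

# Hypothetical short list of two-label public suffixes, for illustration only.
MULTI_LABEL_SUFFIXES = {"co.uk", "gov.uk"}

def url_to_host(url):
    """Rough stand-in for URL_TO_HOST: return the host part of a URL."""
    return urlsplit(url).hostname

def domain_remove_subdomains(hostname):
    """Rough stand-in for DOMAIN_REMOVE_SUBDOMAINS: keep the registered domain."""
    labels = hostname.split(".")
    suffix_len = 2 if ".".join(labels[-2:]) in MULTI_LABEL_SUFFIXES else 1
    return ".".join(labels[-(suffix_len + 1):])

def apply_transformations(message):
    """Add the two derived fields in the order they are listed under "output"."""
    enriched = dict(message)
    enriched["full_hostname"] = url_to_host(enriched["url"])
    enriched["domain_without_subdomains"] = domain_remove_subdomains(
        enriched["full_hostname"])
    return enriched

for url in ("http://www.pravda.ru/science/",
            "http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878"):
    result = apply_transformations({"url": url})
    print(result["full_hostname"], result["domain_without_subdomains"])
```

The second expression reads `full_hostname`, which is why the order of the two entries matters in this sketch.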

All parser configurations are stored in Zookeeper. Use the following script to upload configurations to Zookeeper:

/usr/metron/$METRON_VERSION/bin/zk_load_configs.sh --mode PUSH -i /usr/metron/$METRON_VERSION/config/zookeeper -z $ZOOKEEPER_HOST:2181

Note: You might receive the following warning messages when you execute the previous command. You can safely ignore these warning messages.

log4j:WARN No appenders could be found for logger (org.apache.curator.framework.imps.CuratorFrameworkImpl).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.
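Because the configuration files are hand-edited JSON, a stray comma or missing brace is easy to introduce. As an optional precaution, a small script can confirm that every file under the configuration directory parses before you push it. The sketch below is our own helper and not a Metron tool. The name `find_invalid_json` is hypothetical, and the demo runs against a temporary directory that merely mimics the layout of config/zookeeper.

```python
import json
import tempfile
from pathlib import Path

def find_invalid_json(config_root):
    """Return (path, error message) pairs for every *.json file that fails to parse."""
    problems = []
    for path in sorted(Path(config_root).rglob("*.json")):
        try:
            json.loads(path.read_text(encoding="utf-8"))
        except json.JSONDecodeError as err:
            problems.append((str(path), str(err)))
    return problems

# Demo against a throwaway directory with one good and one broken file.
with tempfile.TemporaryDirectory() as root:
    parsers = Path(root) / "parsers"
    parsers.mkdir()
    (parsers / "squid.json").write_text('{"sensorTopic": "squid"}')
    (parsers / "broken.json").write_text('{"sensorTopic": "squid",}')
    problems = find_invalid_json(root)
    for path, message in problems:
        print(f"{path}: {message}")
```

In practice you would pass the same directory that you give to zk_load_configs.sh with the -i option.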

Next, you might want to configure your sensor's indexing. The indexing topology takes the enriched data produced by the enrichment topology and stores it in one or more supported indices.

You can choose not to configure a sensor's indexing and use the default values. If you leave the writer configuration unspecified, you will see a warning similar to the following in the Storm console: WARNING: Default and (likely) unoptimized writer config used for hdfs writer and sensor squid. You can ignore this warning message if you intend to use the default configuration.

To configure a sensor's indexing:

1. Create a file called squid.json at /usr/metron/$METRON_VERSION/config/zookeeper/indexing/:

touch /usr/metron/$METRON_VERSION/config/zookeeper/indexing/squid.json

2. Populate it with the following:

{
  "elasticsearch": {
    "index": "squid",
    "batchSize": 5,
    "enabled": true
  },
  "hdfs": {
    "index": "squid",
    "batchSize": 5,
    "enabled": true
  }
}

This file sets the batch size to 5 and the index name to squid for both the Elasticsearch and HDFS writers.
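Both writer entries carry the same three settings, so if you manage several sensors you may prefer to generate the file rather than type it. The following is a minimal sketch of that idea. The helper name `build_indexing_config` is hypothetical, and the sketch covers only the three keys shown in this article.

```python
import json

def build_indexing_config(index, batch_size, writers=("elasticsearch", "hdfs")):
    """Return an indexing config with one identical, enabled entry per writer."""
    return {
        writer: {"index": index, "batchSize": batch_size, "enabled": True}
        for writer in writers
    }

config = build_indexing_config("squid", 5)
print(json.dumps(config, indent=2))
```

The printed JSON can be saved as the squid.json indexing file created in step 1.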

3. Push the configuration to ZooKeeper:

/usr/metron/$METRON_VERSION/bin/zk_load_configs.sh --mode PUSH -i /usr/metron/$METRON_VERSION/config/zookeeper -z $ZOOKEEPER_HOST:2181

vi /usr/metron/$METRON_VERSION/config/zookeeper/global.json

"fieldValidations": [
  {
    "input": [ "ip_src_addr", "ip_dst_addr" ],
    "validation": "IP",
    "config": {
      "type": "IPV4"
    }
  }
]

Push the global configuration to Zookeeper:

/usr/metron/$METRON_VERSION/bin/zk_load_configs.sh -i /usr/metron/$METRON_VERSION/config/zookeeper -m PUSH -z $ZOOKEEPER_HOST:2181

Dump the configs and validate that they were persisted:

/usr/metron/$METRON_VERSION/bin/zk_load_configs.sh -m DUMP -z $ZOOKEEPER_HOST:2181

Note: You might receive the following warning messages when you execute the previous command. You can safely ignore these warning messages.

log4j:WARN No appenders could be found for logger (org.apache.curator.framework.imps.CuratorFrameworkImpl).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

The validation configuration above checks that the ip_src_addr and ip_dst_addr fields of each message contain valid IPv4 addresses.

More details on the validation framework can be found in the Validation Framework section: https://github.com/apache/incubator-metron/tree/master/metron-platform/metron-common#transformation-language
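To illustrate the kind of check that an IP validation of type IPV4 expresses, here is a small Python sketch built on the standard ipaddress module. It is not Metron's validator. The function names are ours, and treating a missing field as invalid is an assumption made for this example.

```python
import ipaddress

def is_valid_ipv4(value):
    """Return True only for strings that parse as dotted-quad IPv4 addresses."""
    if not isinstance(value, str):
        return False
    try:
        ipaddress.IPv4Address(value)
        return True
    except ValueError:
        return False

def invalid_fields(message, fields=("ip_src_addr", "ip_dst_addr")):
    """Return the names of the listed fields that fail the IPv4 check."""
    # Assumption: a field that is absent from the message counts as invalid.
    return [name for name in fields if not is_valid_ipv4(message.get(name))]

good = {"ip_src_addr": "127.0.0.1", "ip_dst_addr": "207.109.73.154"}
bad = {"ip_src_addr": "999.1.1.1", "ip_dst_addr": "::1"}
print(invalid_fields(good))
print(invalid_fields(bad))
```

An IPv6 address such as ::1 fails the check because the validation type is restricted to IPv4.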

Log into HOST $HOST_WITH_ENRICHMENT_TAG as root.

/usr/metron/$METRON_VERSION/bin/start_parser_topology.sh -k $KAFKA_HOST:6667 -z $ZOOKEEPER_HOST:2181 -s squid

Put simply, NiFi was built to automate the flow of data between systems, which makes it a good fit for collecting, ingesting, and pushing data to Metron.

The following instructions describe how to install and configure NiFi and how to create the NiFi flow that pushes Squid events into Metron.

The following steps show how to install NiFi. Perform the following as root:

cd /usr/lib
wget http://public-repo-1.hortonworks.com/HDF/centos6/1.x/updates/1.2.0.0/HDF-1.2.0.0-91.tar.gz
tar -zxvf HDF-1.2.0.0-91.tar.gz

cd HDF-1.2.0.0/nifi
vi conf/nifi.properties   # update nifi.web.http.port to 8089

bin/nifi.sh install nifi

service nifi start
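If you script the installation instead of editing conf/nifi.properties by hand, the port change amounts to rewriting a single key=value line. The following Python sketch shows one way to do that on the file's text. The helper `set_property` is hypothetical, and the sample text is a made-up two-line excerpt rather than a real nifi.properties file.

```python
import re

def set_property(properties_text, key, value):
    """Return the text with `key` set to `value`, appending the line if absent."""
    pattern = re.compile(rf"^{re.escape(key)}[ \t]*=.*$", flags=re.MULTILINE)
    line = f"{key}={value}"
    if pattern.search(properties_text):
        # A function replacement avoids backslash interpretation in `value`.
        return pattern.sub(lambda _match: line, properties_text)
    return properties_text.rstrip("\n") + "\n" + line + "\n"

sample = "nifi.web.http.host=\nnifi.web.http.port=8080\n"
updated = set_property(sample, "nifi.web.http.port", "8089")
print(updated)
```

To apply it, read the properties file into a string, call the helper, and write the result back before starting the service.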

Now we will create a flow to capture events from Squid and push them into Metron.

squidclient -h 127.0.0.1 "http://www.cnn.com"

By convention, the index where the new messages will be indexed is called squid_index_[timestamp] and the document type is squid_doc.
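Given this naming convention, a quick scripted check is to ask Elasticsearch how many documents of type squid_doc exist in indices that match squid_index_*. The sketch below only builds the request and leaves sending it to you. The host name, the default port 9200, and the helper name `build_count_request` are assumptions for illustration, and the type-scoped _count path applies to older Elasticsearch releases that still support document types.

```python
from urllib.parse import quote
from urllib.request import Request

def build_count_request(es_host, es_port=9200,
                        index_pattern="squid_index_*", doc_type="squid_doc"):
    """Build a GET request for the _count endpoint of the Squid indices."""
    index_part = quote(index_pattern, safe="*")
    url = f"http://{es_host}:{es_port}/{index_part}/{doc_type}/_count"
    return Request(url, method="GET")

request = build_count_request("localhost")
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request) and read the JSON response body.
```

A count greater than zero after you generate traffic with squidclient suggests that the messages reached the index.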

To verify that the messages were indexed correctly, we can use the Elasticsearch Head plugin.

/usr/share/elasticsearch/bin/plugin install mobz/elasticsearch-head/1.x