Current implementation

Base operations

Replication is meant to propagate a modification made on one server to the associated servers. We must also ensure that modifications of the same entry on more than one server do not lead to inconsistencies.

As remote servers may be unavailable due to network conditions, we also have to wait for synchronization to complete before we can consider an entry fully replicated. For instance, if we delete an entry on server A, it can only be deleted for real once all the remote servers have confirmed that the deletion was successful.
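The waiting rule for deletions can be sketched as follows. This is a minimal sketch under stated assumptions, not the MITOSIS code: the PendingDeletions class, its methods, and the use of the entry UUID as the key are hypothetical. It only shows that a deleted entry stays pending until every peer replica has acknowledged the deletion, and only then can be purged.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical tracker: an entry deleted locally is only purged for real
// once every peer replica has confirmed the deletion.
final class PendingDeletions {
    private final Set<String> peers;
    // entry UUID -> peers that have not confirmed the deletion yet
    private final Map<String, Set<String>> waiting = new HashMap<>();

    PendingDeletions(Set<String> peers) {
        this.peers = Set.copyOf(peers);
    }

    // Called when the entry is deleted locally.
    // Returns true if there is no peer to wait for (the entry can be purged at once).
    boolean markDeleted(String entryUuid) {
        if (peers.isEmpty()) {
            return true;
        }
        waiting.put(entryUuid, new HashSet<>(peers));
        return false;
    }

    // Called when a peer confirms the deletion.
    // Returns true once the last peer has confirmed, i.e. the entry can now be purged.
    boolean acknowledge(String entryUuid, String peerId) {
        Set<String> remaining = waiting.get(entryUuid);
        if (remaining == null) {
            return false; // unknown entry, or already purged
        }
        remaining.remove(peerId);
        if (remaining.isEmpty()) {
            waiting.remove(entryUuid);
            return true;
        }
        return false;
    }

    boolean isPending(String entryUuid) {
        return waiting.containsKey(entryUuid);
    }
}
```

With peers instance_b and instance_c, an entry deleted on instance_a stays pending after instance_b confirms, and becomes purgeable only when instance_c confirms too.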

CSN and UUID

We use two tags, stored within each entry, to manage replication. The CSN (Change Sequence Number) tells which server owns an entry: an entry replicated on 3 servers will have 3 distinct CSNs. The UUID (Universally Unique Identifier) identifies one entry, and only one. So an entry replicated on 3 servers has 3 CSNs (one per server) but only one UUID, as it is the same entry.
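To make the CSN/UUID distinction concrete, here is a minimal sketch. The CSN layout (timestamp, replica id, sequence number) and the Csn, EntryCopy and CsnDemo names are assumptions for illustration; only the rule "one UUID per entry, one CSN per server" comes from the text above.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.UUID;

// Assumed CSN layout (hypothetical): timestamp, id of the replica that made
// the change, and a sequence number for changes within the same millisecond.
record Csn(long timestamp, String replicaId, int sequence) implements Comparable<Csn> {
    private static final Comparator<Csn> ORDER =
        Comparator.comparingLong(Csn::timestamp)
                  .thenComparing(Csn::replicaId)
                  .thenComparingInt(Csn::sequence);

    @Override
    public int compareTo(Csn other) {
        return ORDER.compare(this, other);
    }
}

// One server's copy of a replicated entry: shared UUID, server-specific CSN.
record EntryCopy(String serverId, UUID entryUuid, Csn entryCsn) {}

final class CsnDemo {
    // Builds one copy per server: every copy gets the same UUID,
    // but each carries a CSN stamped with its own replica id.
    static List<EntryCopy> replicate(UUID uuid, List<String> servers, long now) {
        List<EntryCopy> copies = new ArrayList<>();
        for (String server : servers) {
            copies.add(new EntryCopy(server, uuid, new Csn(now, server, 0)));
        }
        return copies;
    }
}
```

Replicating one entry on instance_a, instance_b and instance_c yields three copies that all carry the same UUID but three different CSNs.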

Configuration

The replication system is multi-master, i.e., each server can update any server it is connected to. Telling a server to replicate to others is simple:

<replicationInterceptor>
  <configuration>
    <replicationConfiguration logMaxAge="5"
                              replicaId="instance_a"
                              replicationInterval="2"
                              responseTimeout="10"
                              serverPort="10390">
      <s:property name="peerReplicas">
        <s:set>
          <s:value>instance_b@localhost:1234</s:value>
          <s:value>instance_c@localhost:1234</s:value>
        </s:set>
      </s:property>
    </replicationConfiguration>
  </configuration>
</replicationInterceptor>

Here, the server instance_a is associated with two replicas: instance_b and instance_c. You simply list the remote servers you want it to connect to.
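Each peerReplicas value above reads as a replica id, an '@', then a host and port. A minimal parsing sketch, assuming exactly that replicaId@host:port shape; the PeerReplica record and its parse method are hypothetical and are not the ApacheDS configuration API.

```java
// Hypothetical parser for peer entries written as replicaId@host:port,
// the shape used by the peerReplicas values in the configuration above.
record PeerReplica(String replicaId, String host, int port) {
    static PeerReplica parse(String value) {
        int at = value.indexOf('@');
        int colon = value.lastIndexOf(':');
        // Require a non-empty id, a non-empty host and a non-empty port.
        if (at <= 0 || colon <= at + 1 || colon == value.length() - 1) {
            throw new IllegalArgumentException("Expected replicaId@host:port, got: " + value);
        }
        String id = value.substring(0, at);
        String host = value.substring(at + 1, colon);
        int port = Integer.parseInt(value.substring(colon + 1)); // NumberFormatException if not numeric
        if (port < 1 || port > 65535) {
            throw new IllegalArgumentException("Port out of range: " + port);
        }
        return new PeerReplica(id, host, port);
    }
}
```

For example, parsing instance_b@localhost:1234 gives the replica id instance_b, the host localhost and the port 1234, while a bare instance_c is rejected.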

The replication interceptor

The MITOSIS service is implemented as an interceptor in the current version (1.5.4). It handles the following operations:

- add

- delete

- hasEntry

- list

- lookup

- modify

- move

- moveAndRename

- rename

- search

Operations

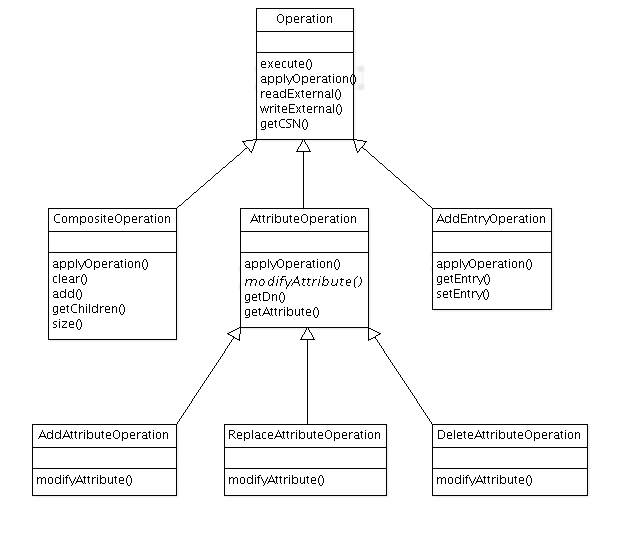

We are using *Operationµ objects to manage replication inside the interceptor. Here is the *Operationµ classes hierarchy :
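The following is a simplified sketch of the hierarchy, assuming an entry is a map of attribute names to single string values and the backend is a map of DN to entry. Only the class names AddEntryOperation, ReplaceAttributeOperation and CompositeOperation come from this document; every field, constructor and the apply method are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Simplified, hypothetical model of the Operation hierarchy. The "store"
// maps a DN to an entry; an entry maps attribute names to string values.
abstract class Operation {
    final String csn; // CSN of the change, kept as a plain string here

    Operation(String csn) {
        this.csn = csn;
    }

    abstract void apply(Map<String, Map<String, String>> store);
}

final class AddEntryOperation extends Operation {
    private final String dn;
    private final Map<String, String> entry;

    AddEntryOperation(String csn, String dn, Map<String, String> entry) {
        super(csn);
        this.dn = dn;
        this.entry = entry;
    }

    @Override
    void apply(Map<String, Map<String, String>> store) {
        store.put(dn, new HashMap<>(entry)); // overwrites an existing entry with the same DN
    }
}

final class ReplaceAttributeOperation extends Operation {
    private final String dn;
    private final String attribute;
    private final String value;

    ReplaceAttributeOperation(String csn, String dn, String attribute, String value) {
        super(csn);
        this.dn = dn;
        this.attribute = attribute;
        this.value = value;
    }

    @Override
    void apply(Map<String, Map<String, String>> store) {
        Map<String, String> target = store.get(dn);
        if (target == null) {
            throw new IllegalStateException("No such entry: " + dn);
        }
        target.put(attribute, value);
    }
}

// Groups several operations and applies them in order.
final class CompositeOperation extends Operation {
    private final List<Operation> children = new ArrayList<>();

    CompositeOperation(String csn) {
        super(csn);
    }

    CompositeOperation add(Operation child) {
        children.add(child);
        return this;
    }

    @Override
    void apply(Map<String, Map<String, String>> store) {
        for (Operation child : children) {
            child.apply(store);
        }
    }
}
```

The composite is what lets a single logical change, such as the delete described below, be expressed as several attribute replacements.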

Add operation

It creates an AddEntryOperation object, with an ADD_ENTRY operation type (how useful is that, given that we have already defined a specific class for this operation?), an entry and a CSN.

The newly created entry will contain two new attributes:

- an entryUUID with a newly generated UUID

- an entryDeleted set to FALSE
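A minimal sketch of how an entry could be tagged before being added, assuming a plain map of attribute names to values stands in for the server's entry type. The AddPreparation class is hypothetical. Keeping an entryUUID already present (for instance, one received from a peer) follows from the rule that all replicas share one UUID; it is an assumption, not documented behavior.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.UUID;

// Hypothetical helper: tags an entry with the two attributes set on add.
final class AddPreparation {
    static Map<String, String> prepare(Map<String, String> incoming) {
        Map<String, String> entry = new HashMap<>(incoming);
        // Generate a UUID for a brand-new entry; keep an existing one so that
        // every replica of the same entry carries the same UUID.
        entry.putIfAbsent("entryUUID", UUID.randomUUID().toString());
        // A freshly added entry is live.
        entry.put("entryDeleted", "FALSE");
        return entry;
    }
}
```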

If the added entry already exists on the current server, we should consider that it can't be added.

One option is to check nothing more than the existence of the entry in the base: either the entry is absent and we can add it, or it is present and we discard the new entry, throwing an error.

Another option is to consider that the entry was created on more than one remote server, and then created locally. In that case we may have to replace the old entry with the new one, even if they differ. This is the current implementation.
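The two options can be contrasted in a short sketch. The AddConflictPolicy enum, EntryAlreadyExistsException and AddHandler are hypothetical names; REPLACE mirrors what the text calls the current implementation. The sketch deliberately ignores the pending 'deleted' case, which is still an open question.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical policies for an add that targets a DN that already exists.
enum AddConflictPolicy { REJECT, REPLACE }

final class EntryAlreadyExistsException extends RuntimeException {
    EntryAlreadyExistsException(String dn) {
        super("Entry already exists: " + dn);
    }
}

final class AddHandler {
    private final Map<String, Map<String, String>> store = new HashMap<>();
    private final AddConflictPolicy policy;

    AddHandler(AddConflictPolicy policy) {
        this.policy = policy;
    }

    void add(String dn, Map<String, String> entry) {
        if (store.containsKey(dn) && policy == AddConflictPolicy.REJECT) {
            // Option 1: the entry exists, so the new one is discarded with an error.
            throw new EntryAlreadyExistsException(dn);
        }
        // New DN, or option 2 (REPLACE): the new entry wins, even if it differs.
        store.put(dn, new HashMap<>(entry));
    }

    Map<String, String> get(String dn) {
        return store.get(dn);
    }
}
```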

What if the entry already exists but is in a pending 'deleted' state? This case still has to be checked.

As we may receive an Add request from a remote server (through replication), we currently create so-called glue entries. They are only necessary if an entry can be added while its parent tree is absent locally. That does not make much sense either: the tree has necessarily been created on the remote server, and the entries it contains have already been transmitted to the local server, so we don't need to create glue entries.
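To clarify what a glue entry is, this sketch computes which ancestors of an incoming entry are missing locally and would need glue entries. GlueEntries and its methods are hypothetical, and DNs are split naively on ',' (no escaped commas, no spaces after separators). If, as argued above, the parent tree always arrives first, the returned list should be empty.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

// Hypothetical sketch: ancestors of an incoming entry that are absent locally,
// i.e. the glue entries that would have to be created to attach it.
final class GlueEntries {
    static List<String> missingAncestors(String dn, String suffix, Set<String> existing) {
        List<String> missing = new ArrayList<>();
        String parent = parentOf(dn);
        // Walk up until we reach the suffix (naming context) or an existing entry.
        while (parent != null && !parent.equals(suffix) && !existing.contains(parent)) {
            missing.add(0, parent); // outermost first: glue must be created top-down
            parent = parentOf(parent);
        }
        return missing;
    }

    // Naive parent computation: drop the first RDN.
    static String parentOf(String dn) {
        int comma = dn.indexOf(',');
        return comma < 0 ? null : dn.substring(comma + 1);
    }
}
```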

Delete operation

It creates a CompositeOperation object containing two ReplaceAttributeOperations. The entry is not physically deleted: the first operation adds an entryDeleted AttributeType to the entry, and the second injects an entryCSN AttributeType holding a newly created CSN.

Here is the content of the operation:

- ReplaceAttributeOperation
    - entryDeleted, value TRUE

- ReplaceAttributeOperation
    - entryCSN, with a new CSN

The delete operation should be a single attribute modification. Currently, two requests are sent to the backend (one per added attribute), which is unnecessary.
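The difference between the current behavior and the suggested one can be sketched with a backend that counts modify requests. CountingBackend and SoftDelete are hypothetical; a plain map stands in for the real partition.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical backend that counts modify requests.
final class CountingBackend {
    final Map<String, Map<String, String>> entries = new HashMap<>();
    int modifyCalls = 0;

    // One modify request may replace several attributes at once.
    void modify(String dn, Map<String, String> replacements) {
        modifyCalls++;
        Map<String, String> entry = entries.get(dn);
        if (entry == null) {
            throw new IllegalStateException("No such entry: " + dn);
        }
        entry.putAll(replacements);
    }
}

final class SoftDelete {
    // Current behavior: one backend request per ReplaceAttributeOperation.
    static void deleteTwoRequests(CountingBackend backend, String dn, String newCsn) {
        backend.modify(dn, Map.of("entryDeleted", "TRUE"));
        backend.modify(dn, Map.of("entryCSN", newCsn));
    }

    // Suggested behavior: both replacements carried by a single modification.
    static void deleteOneRequest(CountingBackend backend, String dn, String newCsn) {
        backend.modify(dn, Map.of("entryDeleted", "TRUE", "entryCSN", newCsn));
    }
}
```

Besides saving a round trip, a single modification has another benefit: in LDAP, all changes in one modify request are applied as one atomic operation. With one request, an entry can therefore never be observed with entryDeleted set but with its old entryCSN.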