Problem

Potential Data Loss

When we take a bookie out of the cluster, we have no way of knowing whether doing so will cause quorum loss.

For example, if we've already lost or removed a few bookies, the auto rereplicator might still be replicating the ledgers for the lost bookies, or might already have failed to replicate some of them. Now we want to remove another bookie. To make sure we don't lose any data, we need to know whether the ledgers on the lost bookies that share a quorum with this bookie have all been replicated. However, there's no bookie lifecycle state that indicates whether a bookie's ledgers are still being replicated or have already been replicated. So removing a bunch of bookies too quickly could cause quorum loss.

Situations like this include, but are not limited to:

- Scale down the cluster

- OS migration (requires a reinstall)

- Move bookies to a different cluster

- Frequent bad disks

Expensive Operation & Lack of Automation

Currently, to remove a few bookies from the cluster, we have to do a lot of manual operations:

- Kill the bookie

- Run the manual rereplicator / auto rereplicator

- Wait for rereplication to finish for that bookie

- Remove the cookie for that bookie

- Remove the bookie from the cluster

- Start killing the next bookie

The manual operation for a single bookie is not that bad, but when we are dealing with hundreds or thousands of bookies, the operations become a pain. There's currently no easy way to automate the whole process, because we need to do a lot of manual checks to prevent data loss as far as we can.

However, if we introduce a lifecycle state and add an HTTP management interface, we can automate the whole lifecycle of Bookkeeper operations, which brings a lot of benefits:

- Bookkeeper on cloud can be auto-scaled

- Save engineering time spent on operations

- HTTP endpoint would be easier to interact with

- Make it possible to add an admin dashboard for the Bookkeeper cluster

Design

Bookkeeper Lifecycle State

- Active

- Draining

- Draining-Failed

- Drained

Active

An active bookie is a bookie that has been registered in the cluster and is ready to serve traffic at any time. Being in the active state doesn't necessarily mean that the bookie is running. An active bookie could be a running bookie serving read and write traffic, a read-only bookie, or even a bookie that is registered but not running the server.

Draining

A draining bookie is a bookie that we're going to retire or remove from the cluster. If a bookie is in the draining state, it should either serve in read-only mode or not serve any traffic. A bookie can enter the draining state only from the active state. When a bookie transitions to the draining state, the Auditor should detect or be notified about the state change and start the rereplication for that bookie.

Draining-Failed

If a bookie is in the draining state, the draining process will either succeed or fail. When we have tried our best but are still unable to replicate all the ledgers on the draining bookie, the bookie should transition into the draining-failed state. Once a bookie is in the draining-failed state, the auto rereplicators should stop replicating its ledgers. A draining-failed bookie can still serve read traffic but no write traffic. Usually a draining-failed bookie should not transition into any other state automatically, because the state indicates that some ledgers on that bookie can't be replicated, so removing the bookie might cause data loss.

Draining-failed bookies have to be taken care of manually: we can move such a bookie into the drained state only after fixing the "bad" ledgers. The concern is that if we have a lot of draining-failed bookies, there will be a lot of manual work, which conflicts with the goal of automating the whole lifecycle process. So in the future, we might need a better solution, or some strict rules for auto-fixing the "bad" ledgers.

Drained

A bookie moves from the draining state to the drained state once all its ledgers have been replicated successfully. The drained state indicates that the bookie is now safe to remove from the cluster: all ledgers on that bookie have been replicated successfully, so removing it won't cause any data loss. A drained bookie should only serve read traffic, no write traffic.
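The traffic rules described for the four states can be summarized in a small lookup table. The following is only an illustrative sketch in Python: the names `LifecycleState`, `ALLOWED_TRAFFIC`, and `is_allowed` are hypothetical and not part of Bookkeeper, and the table shows the most traffic each state permits (a draining bookie may also serve no traffic at all, and an active bookie may be read-only or not running).

```python
from enum import Enum


class LifecycleState(Enum):
    """Hypothetical names for the four proposed lifecycle states."""
    ACTIVE = "active"
    DRAINING = "draining"
    DRAINING_FAILED = "draining-failed"
    DRAINED = "drained"


# Upper bound on the traffic a bookie may serve in each state.
ALLOWED_TRAFFIC = {
    LifecycleState.ACTIVE: {"read", "write"},
    LifecycleState.DRAINING: {"read"},         # read-only, or no traffic
    LifecycleState.DRAINING_FAILED: {"read"},  # reads only, never writes
    LifecycleState.DRAINED: {"read"},          # reads only, never writes
}


def is_allowed(state: LifecycleState, operation: str) -> bool:
    """Return True if `operation` ("read" or "write") is permitted in `state`."""
    return operation in ALLOWED_TRAFFIC[state]
```

In this sketch, writes are permitted only in the active state, which matches the descriptions above.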

Where do we persist lifecycle state?

There are basically two options:

- In Zookeeper (Metadata Store)

- On Ledger disk / Journal disk

We prefer to store the lifecycle state in Zookeeper because we need to access and manage it even when the bookie host is not available. If we stored the state on disk, then whenever the bookie had a bad disk or was otherwise unreachable, we would lose the ability to access the lifecycle state of that bookie.

In Zookeeper, we can create a znode for the lifecycle state under the cookie znode to store the state data: cookies/<ip:port>/state
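As a rough illustration of that layout, the helper below builds the state znode path for a bookie. It is a sketch only: the function name `lifecycle_state_path` is hypothetical, and the `/ledgers` root used in the example is an assumption about where the cookies znode lives in a given deployment.

```python
def lifecycle_state_path(ledgers_root: str, bookie_id: str) -> str:
    """Build the proposed state znode path: <root>/cookies/<ip:port>/state.

    `ledgers_root` is an assumed metadata root (for example "/ledgers");
    `bookie_id` is the bookie's "ip:port" identifier.
    """
    return f"{ledgers_root.rstrip('/')}/cookies/{bookie_id}/state"


# Example with an assumed root and a made-up bookie address.
print(lifecycle_state_path("/ledgers", "10.0.0.1:3181"))
# prints "/ledgers/cookies/10.0.0.1:3181/state"
```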

How do we manage lifecycle state?

- Default: Active

- Active -> Draining: We can build a tool to modify the state in Zookeeper, or we can set it through an HTTP endpoint once we have it

- Draining -> Draining-Failed: The Auditor changes the state in ZK when it detects that not all ledgers can be replicated successfully

- Draining-Failed -> Drained: We manually set the state using a tool or through the HTTP endpoint, or we can try to make the Auditor smart enough to handle all kinds of replication failures

- Draining -> Drained: The Auditor changes the state in ZK when it detects that all ledgers for the bookie have been replicated successfully

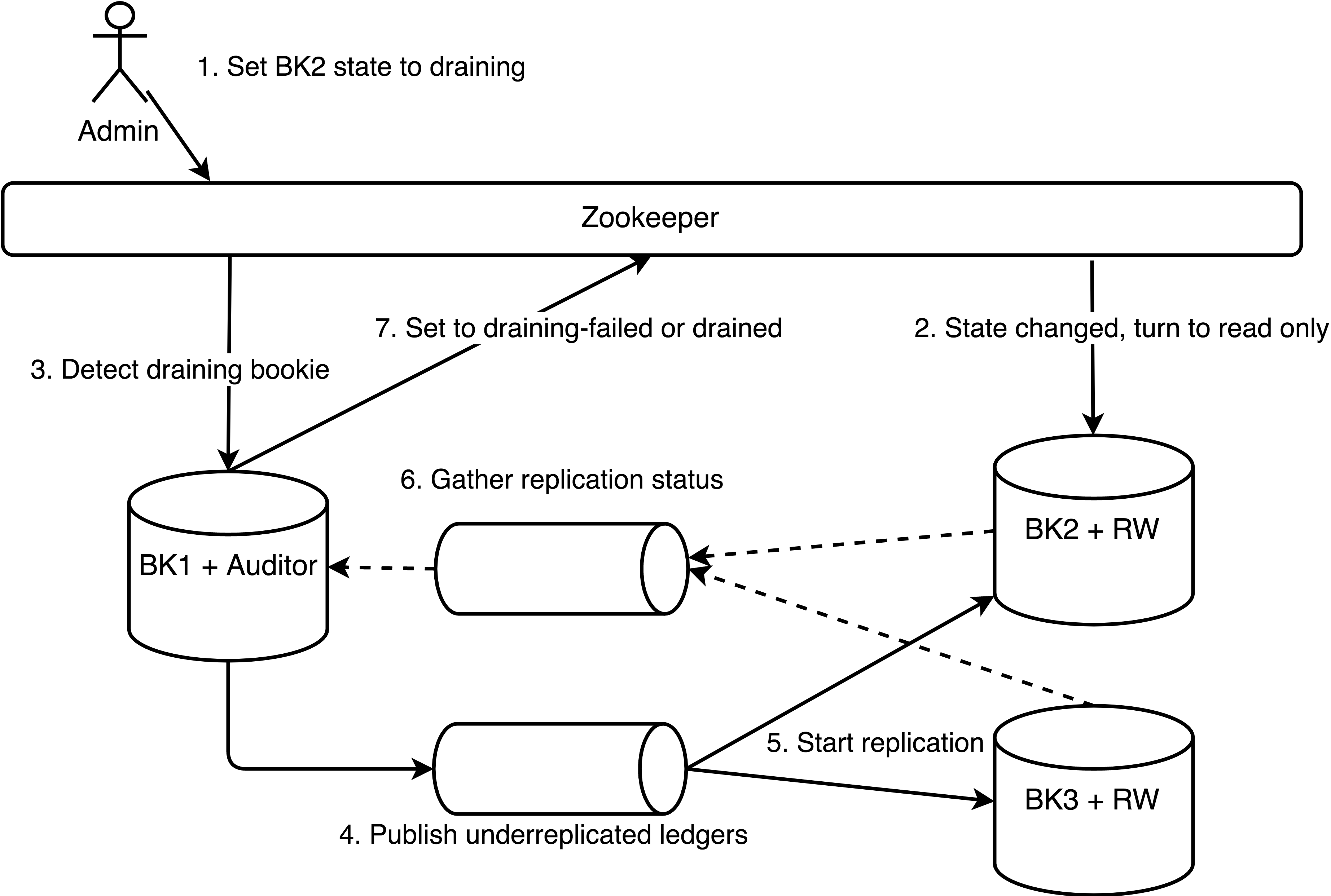
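The transitions listed above form a small state machine. A minimal sketch in Python of how a tool or endpoint might validate a requested change is shown below. The names `ALLOWED_TRANSITIONS` and `transition` are hypothetical, and the sketch assumes that drained has no outgoing transitions because no transition out of it is listed above.

```python
# Allowed lifecycle transitions, taken from the list above.
ALLOWED_TRANSITIONS = {
    "active": {"draining"},
    "draining": {"draining-failed", "drained"},
    "draining-failed": {"drained"},
    "drained": set(),  # assumption: no transition out of drained
}


def transition(current: str, target: str) -> str:
    """Return `target` if the move is allowed, otherwise raise ValueError."""
    if target not in ALLOWED_TRANSITIONS.get(current, set()):
        raise ValueError(f"illegal transition: {current} -> {target}")
    return target


state = "active"                       # default state
state = transition(state, "draining")  # set by a tool or HTTP endpoint
state = transition(state, "drained")   # set by the Auditor on success
```

Rejecting anything outside the table means, for example, that a bookie cannot jump straight from active to drained without its ledgers being rereplicated first.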

What will happen when we set a bookie to draining?

- Setting a bookie to the draining state modifies the state in ZK

- Each bookie listens on its own lifecycle state; the bookie detects the state change and turns read-only

- The Auditor's periodic scan detects that a draining bookie is already read-only

- The Auditor publishes the ledgers on the draining bookie to the underreplicated ledger pool

- The ReplicationWorker keeps polling the underreplicated ledger pool and starts replicating the ledgers

- The ReplicationWorker reports replication success or failure for each ledger in some way

- The Auditor gathers the replication status and decides whether it's time to set the state to draining-failed or drained
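The last step, in which the Auditor decides the next state from the gathered replication results, could look roughly like the sketch below. This is an assumption-laden illustration, not the Auditor's actual logic: the function `decide_next_state`, the per-ledger status strings (`"pending"`, `"replicated"`, `"failed"`), and the choice to wait for all pending ledgers before declaring failure are all hypothetical. Here `"failed"` is taken to mean that replication was retried and finally given up on.

```python
from typing import Mapping, Optional


def decide_next_state(ledger_status: Mapping[int, str]) -> Optional[str]:
    """Decide the next lifecycle state of a draining bookie.

    `ledger_status` maps ledger id -> "pending" | "replicated" | "failed".
    Returns None while the bookie should stay in the draining state.
    """
    statuses = set(ledger_status.values())
    if "pending" in statuses:
        return None               # replication still in progress
    if "failed" in statuses:
        return "draining-failed"  # at least one ledger could not be replicated
    return "drained"              # every ledger replicated (or none to replicate)


print(decide_next_state({1: "replicated", 2: "pending"}))     # prints "None"
print(decide_next_state({1: "replicated", 2: "failed"}))      # prints "draining-failed"
print(decide_next_state({1: "replicated", 2: "replicated"}))  # prints "drained"
```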

We have two ways to run the Auditor and ReplicationWorker. We can run them either on the bookies or in a separate job outside of the Bookkeeper cluster.

The following diagrams show roughly what happens when we set a bookie from the Active state to the Draining state.

Note: The queue in the following graphs can be any abstract queue (ledger, zookeeper)

Scenario 1 - Run Auditor & ReplicationWorker on Bookie:

Scenario 2 - Run Auditor and ReplicationWorker in a separate job:

What shall we do with a drained bookie?

A drained bookie is safe to remove from the cluster at any time. We need to delete the cookie and clear the lifecycle state before removing the bookie from the cluster. Otherwise, when the bookie comes back, it will still be in the drained state, which means it can only serve in read-only mode.
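The cleanup before removal can be sketched against an in-memory stand-in for the metadata store. Everything here is hypothetical: `prepare_removal` is not an existing tool, a plain `dict` replaces Zookeeper, and the relative paths follow the cookies/<ip:port>/state layout proposed earlier.

```python
def prepare_removal(store: dict, bookie_id: str) -> None:
    """Clear the lifecycle state and cookie of a drained bookie.

    `store` is a dict standing in for the metadata store, keyed by znode path.
    Raises RuntimeError if the bookie is not in the drained state.
    """
    cookie_path = f"cookies/{bookie_id}"
    state_path = f"{cookie_path}/state"
    if store.get(state_path) != "drained":
        raise RuntimeError(f"bookie {bookie_id} is not drained; refusing to remove")
    # Delete the child (state) before the parent (cookie), since a znode
    # with children cannot be deleted in Zookeeper.
    del store[state_path]
    store.pop(cookie_path, None)


store = {
    "cookies/10.0.0.1:3181": "cookie-data",
    "cookies/10.0.0.1:3181/state": "drained",
}
prepare_removal(store, "10.0.0.1:3181")
```

Refusing to clean up a bookie that is not drained keeps the safety property of the lifecycle: the cookie is only deleted once all ledgers are known to be replicated.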

Bookkeeper Lifecycle Automation

Introducing the new Bookkeeper lifecycle state would reduce the chance of data loss during operations and relieve some of the operational pain. However, it doesn't address the issue of automating operations.

This is why we want to introduce an HTTP management endpoint for Bookkeeper. It would be used to:

- Manage Lifecycle State

- Manage Serving state

- Manage Cookie

- Register callback for state change

- Report bad ledgers or data loss

Where do we run the HTTP server?

Actions

TBA