The BookKeeper journal manager is an implementation of the HDFS JournalManager interface. The JournalManager interface allows you to plug custom write-ahead logging into the HDFS NameNode.

Setting up the BookKeeper Journal Manager

The BookKeeper configuration guide at http://zookeeper.apache.org/bookkeeper/docs/r4.0.0/bookkeeperConfig.html covers the steps necessary to set up a BookKeeper cluster. We recommend that you use at least 3 servers to ensure fault tolerance.

Once BookKeeper has been set up, you can configure the journal manager. Currently, JournalManagers are only available in the trunk and 0.23.3 branches. The BookKeeper JournalManager is available in trunk, but the most recent version is at https://github.com/ivankelly/hadoop-common/tree/BKJM-benching. All the changes in this branch are pending submission into trunk. This guide uses that branch.

Pull the HDFS source from GitHub and compile it.

    ~ $ git clone git://github.com/ivankelly/hadoop-common.git
    ~ $ cd hadoop-common
    ~/hadoop-common $ mvn package -Pdist -DskipTests

Once compiled, you must configure the namenode.

    ~/hadoop-common $ export HADOOP_COMMON_HOME=$(pwd)/$(ls -d hadoop-common-project/hadoop-common/target/hadoop-common-*-SNAPSHOT)
    ~/hadoop-common $ export HADOOP_HDFS_HOME=$(pwd)/$(ls -d hadoop-hdfs-project/hadoop-hdfs/target/hadoop-hdfs-*-SNAPSHOT)
    ~/hadoop-common $ export PATH=$HADOOP_COMMON_HOME/bin:$HADOOP_HDFS_HOME/bin:$PATH
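The exports above rely on `ls -d` matching exactly one build directory. A minimal sketch of a sanity check follows; `check_home` is a hypothetical helper written for this guide, not something shipped with Hadoop.

```shell
#!/usr/bin/env bash
# check_home is a hypothetical helper, not part of Hadoop.
# It succeeds only if the given value names an existing directory.
# If the ls -d glob matched nothing, or matched several directories,
# the value will not be a single valid directory and the check fails.
check_home() {
  local name="$1"
  local value="$2"
  if [ -z "$value" ] || [ ! -d "$value" ]; then
    echo "$name is not a directory: '$value'" >&2
    return 1
  fi
  echo "$name=$value"
}

check_home HADOOP_COMMON_HOME "${HADOOP_COMMON_HOME:-}" \
  && check_home HADOOP_HDFS_HOME "${HADOOP_HDFS_HOME:-}" \
  || echo "fix the exports before continuing" >&2
```

If either check fails, rerun the build or inspect the `target` directories before going further.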

Copy the BookKeeperJournalManager jar into the HDFS lib directory.

~/hadoop-common $ cp hadoop-hdfs-project/hadoop-hdfs/src/contrib/bkjournal/target/hadoop-hdfs-bkjournal-0.24.0-SNAPSHOT.jar $HADOOP_HDFS_HOME/share/hadoop/hdfs/lib/

Edit $HADOOP_COMMON_HOME/etc/hadoop/hdfs-site.xml and add the following configuration.

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>fs.default.name</name>
        <value>hdfs://localhost/</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:///tmp/bk-nn-local-snapshot</value>
      </property>
      <property>
        <name>dfs.namenode.edits.dir</name>
        <value>file:///tmp/bk-nn-local-edits,bookkeeper://localhost:2181/hdfsjournal</value>
      </property>
      <property>
        <name>dfs.namenode.edits.journal-plugin.bookkeeper</name>
        <value>org.apache.hadoop.contrib.bkjournal.BookKeeperJournalManager</value>
      </property>
    </configuration>

This configures the namenode with one local edits directory and one BookKeeper edits directory. The BookKeeperJournalManager connects to localhost:2181 as the ZooKeeper server. Note that this does not start the namenode in high availability mode; BookKeeperJournalManager can, however, also be used as the shared edits directory in an HA setup.
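To make the structure of the `dfs.namenode.edits.dir` value easier to see, here is a small sketch that splits it into its entries and breaks the `bookkeeper://` URI into its parts. `describe_edits_dir` is a hypothetical helper for illustration only. Reading the path component (`/hdfsjournal`) as a location inside ZooKeeper is an assumption; the guide itself only states that `localhost:2181` is the ZooKeeper server.

```shell
#!/usr/bin/env bash
# Value of dfs.namenode.edits.dir from the configuration in this guide.
edits_dirs='file:///tmp/bk-nn-local-edits,bookkeeper://localhost:2181/hdfsjournal'

# describe_edits_dir is a hypothetical helper, not part of Hadoop.
# It prints a one-line description of a single edits dir URI.
describe_edits_dir() {
  local uri="$1"
  local scheme="${uri%%://*}"   # text before "://"
  local rest="${uri#*://}"      # text after "://"
  case "$scheme" in
    file)
      echo "local directory: $rest"
      ;;
    bookkeeper)
      local zk="${rest%%/*}"    # host:port of the ZooKeeper server
      local path="/${rest#*/}"  # assumed to be a path inside ZooKeeper
      echo "bookkeeper journal: zookeeper=$zk path=$path"
      ;;
    *)
      echo "unknown scheme: $scheme"
      ;;
  esac
}

# Entries are comma-separated; describe each one in turn.
printf '%s\n' "$edits_dirs" | tr ',' '\n' | while read -r entry; do
  describe_edits_dir "$entry"
done
```

The `bookkeeper` scheme matches the suffix of the `dfs.namenode.edits.journal-plugin.bookkeeper` property, which names the class that handles URIs with that scheme.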

The namenode is now ready to start.

    ~/hadoop-common $ hdfs namenode -format
    ~/hadoop-common $ hdfs namenode

The web interface should now be available at http://localhost:50070/.

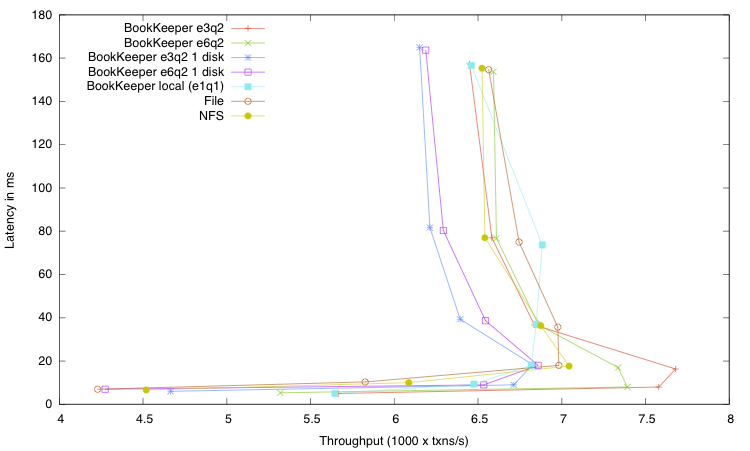

Benchmarks

We ran benchmarks on a cluster of machines with:

- 2x Quad Xeon 2.5 GHz

- 16GB RAM

- 4x 7200RPM SATA disks

- Gigabit ethernet

The benchmark we ran was NNThroughputBenchmark with a workload to create 200000 files. The number of threads was varied across the run, from 32 to 1024.
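A sweep like this can be scripted. The sketch below only prints the commands it would run. The thread counts between 32 and 1024 are an assumption (powers of two), and the exact invocation and classpath used for these benchmarks are not stated in this guide; `-op create`, `-threads` and `-files` are the usual NNThroughputBenchmark options. `bench_commands` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Assumed thread counts; the guide only gives the range 32 to 1024.
THREADS="32 64 128 256 512 1024"
# Number of files to create, as stated in the guide.
FILES=200000

# bench_commands is a hypothetical helper: it prints one benchmark
# command per thread count instead of executing it.
bench_commands() {
  local t
  for t in $THREADS; do
    echo "hadoop org.apache.hadoop.hdfs.server.namenode.NNThroughputBenchmark -op create -threads $t -files $FILES"
  done
}

bench_commands
```

Piping the output to `sh` would run the sweep, provided the class is on the Hadoop classpath in your build.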

Each configuration was run 3 times and the average taken. The following configurations were used:

| Key | Description |
|---|---|
| BookKeeper e3q2 | BookKeeper journal manager, with an ensemble of 3 and quorum of 2, each Bookie using a separate disk for journal and ledger. |
| BookKeeper e6q2 | BookKeeper journal manager, with an ensemble of 6 and quorum of 2, each Bookie using a separate disk for journal and ledger. |
| BookKeeper e3q2 1 disk | BookKeeper journal manager, with an ensemble of 3 and quorum of 2, each Bookie using a single disk for journal and ledger. |
| BookKeeper e6q2 1 disk | BookKeeper journal manager, with an ensemble of 6 and quorum of 2, each Bookie using a single disk for journal and ledger. |
| BookKeeper local (q1e1) | BookKeeper journal manager, with a single Bookie on the same machine as the namenode, using a single disk for journal and ledger. |
| File | File journal manager with a local file on a dedicated disk. |
| NFS | File journal manager with a file stored on an enterprise NetApp Filer. |