Status

Current state: Under Discussion

Discussion thread: https://lists.apache.org/thread.html/70484d6aa4b8e7121181ed8d5857a94bfb7d5a76334b9c8fcc59700c@%3Cdev.flink.apache.org%3E

JIRA: FLINK-10740

Released: <Flink Version>

Please keep the discussion on the mailing list rather than commenting on the wiki (wiki discussions get unwieldy fast).

Motivation

This FLIP aims to solve several problems/shortcomings in the current streaming source interface (SourceFunction) and simultaneously to unify the source interfaces between the batch and streaming APIs. The shortcomings or points that we want to address are:

- One currently implements different sources for batch and streaming execution.

- The logic for "work discovery" (splits, partitions, etc.) and actually "reading" the data is intermingled in the SourceFunction interface and in the DataStream API, leading to complex implementations like the Kafka and Kinesis sources.

- Partitions/shards/splits are not explicit in the interface. This makes it hard to implement certain functionalities in a source-independent way, for example event-time alignment, per-partition watermarks, dynamic split assignment, and work stealing. For example, the Kafka source supports per-partition watermarks, but the Kinesis source doesn't. Neither source supports event-time alignment (selectively reading from splits to make sure that we advance evenly in event time).

- The checkpoint lock is "owned" by the source function. The implementation has to ensure that element emission and state updates happen under the lock. There is no way for Flink to optimize how it deals with that lock.

The lock is not a fair lock. Under lock contention, some threads (for example, the checkpoint thread) might not get the lock.

This also stands in the way of a lock-free actor/mailbox style threading model for operators.

- There are no common building blocks, meaning every source implements a complex threading model by itself. That makes implementing and testing new sources hard, and adds a high bar to contributing to existing sources.

Overall Design

The design has several key aspects, each of which is discussed in its own section below.

Separating Work Discovery from Reading

The sources have two main components:

- SplitEnumerator: Discovers and assigns splits (files, partitions, etc.)

- Reader: Reads the actual data from the splits.

The SplitEnumerator is similar to the old batch source interface's functionality of creating splits and assigning splits. It runs as a single instance, not in parallel (though it could be parallelized in the future, if necessary).

It might run on the JobManager or in a single task on a TaskManager (see below "Where to run the Enumerator").

Example:

- In the File Source, the SplitEnumerator lists all files (possibly sub-dividing them into blocks/ranges).

- For the Kafka Source, the SplitEnumerator finds all Kafka partitions that the source should read from.

The Reader reads the data from the assigned splits. The reader encompasses most of the functionality of the current source interface.

Some readers may read a sequence of bounded splits one after another; some may read multiple (unbounded) splits in parallel.

This separation between enumerator and reader allows mixing and matching different enumeration strategies with split readers. For example, the current Kafka connector has different strategies for partition discovery that are intermingled with the rest of the code. With the new interfaces in place, we would only need one split reader implementation and there could be several split enumerators for the different partition discovery strategies.

With these two components encapsulating the core functionality, the main Source interface itself is only a factory for creating split enumerators and readers.

Batch and Streaming Unification

Each source should be able to work as a bounded (batch) and as an unbounded (continuous streaming) source.

The actual decision whether it becomes bounded or unbounded is made in the DataStream API when creating the source stream.

Boundedness is a property that is passed to the source when creating the SplitEnumerator. The readers should be agnostic to this distinction; they simply read the splits assigned to them.

That way, we can also make the API type safe in the future, when we can explicitly model bounded streams.

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
FileSource<MyType> theSource = new ParquetFileSource("fs:///path/to/dir", AvroParquet.forSpecific(MyType.class));

DataStream<MyType> stream = env.continuousSource(theSource);
DataStream<MyType> boundedStream = env.boundedSource(theSource);

// this would be an option once we add bounded streams to the DataStream API
BoundedDataStream<MyType> batch = env.boundedSource(theSource);

Examples

FileSource

- For "bounded input", it uses a SplitEnumerator that enumerates all files under the given path once.

- For "continuous input", it uses a SplitEnumerator that periodically enumerates all files under the given path and assigns the new ones.
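The continuous variant only needs to remember which files it has already handed out. The following is a minimal sketch, not the FLIP's implementation: it assumes one split per file (no sub-dividing into blocks), and the class name `ContinuousFileEnumeratorSketch` and the `Supplier` standing in for a file-system listing are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.function.Supplier;

/** Hypothetical sketch: one split per file; only newly discovered files become splits. */
class ContinuousFileEnumeratorSketch {
    private final Supplier<List<String>> listFiles; // stand-in for listing the directory
    private final Set<String> alreadyAssigned = new HashSet<>();

    ContinuousFileEnumeratorSketch(Supplier<List<String>> listFiles) {
        this.listFiles = listFiles;
    }

    /** Called periodically; returns only the files not seen in any previous call. */
    List<String> discoverNewFiles() {
        List<String> fresh = new ArrayList<>();
        for (String path : listFiles.get()) {
            if (alreadyAssigned.add(path)) { // add() returns false for already known paths
                fresh.add(path);
            }
        }
        return fresh;
    }
}
```

A bounded enumerator would perform the listing a single time and then report the end of input.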

KafkaSource

- For "bounded input", it uses a SplitEnumerator that lists all partitions, gets the latest offset for each partition, and attaches that as the "end offset" to the split.

- For "continuous input", it uses a SplitEnumerator that lists all partitions and attaches LONG_MAX as the "end offset" to each split.

- The source may have another option to periodically discover new partitions. That would only be applicable to the "continuous input".
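The only difference between the two Kafka enumerators is the end offset they attach, which is decided by the boundedness passed at creation. A minimal sketch under stated assumptions: `PartitionSplit` and `KafkaSplitCreationSketch` are hypothetical names, and the `latestOffsets` map stands in for querying the brokers; no real Kafka client API is used.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Mirrors the Boundedness enum proposed in this FLIP. */
enum Boundedness { BOUNDED, CONTINUOUS_UNBOUNDED }

/** Hypothetical split: one Kafka partition plus the "end offset" attached by the enumerator. */
final class PartitionSplit {
    final String partition;
    final long endOffset;

    PartitionSplit(String partition, long endOffset) {
        this.partition = partition;
        this.endOffset = endOffset;
    }
}

class KafkaSplitCreationSketch {
    /**
     * @param partitions    all partitions the source should read from
     * @param latestOffsets hypothetical stand-in for looking up each partition's latest offset
     */
    static List<PartitionSplit> createSplits(
            List<String> partitions, Map<String, Long> latestOffsets, Boundedness mode) {
        List<PartitionSplit> splits = new ArrayList<>();
        for (String partition : partitions) {
            long end = (mode == Boundedness.BOUNDED)
                    ? latestOffsets.get(partition) // stop at the data available right now
                    : Long.MAX_VALUE;              // LONG_MAX: effectively never ends
            splits.add(new PartitionSplit(partition, end));
        }
        return splits;
    }
}
```

The reader can treat both kinds of splits identically: it reads until it reaches the split's end offset.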

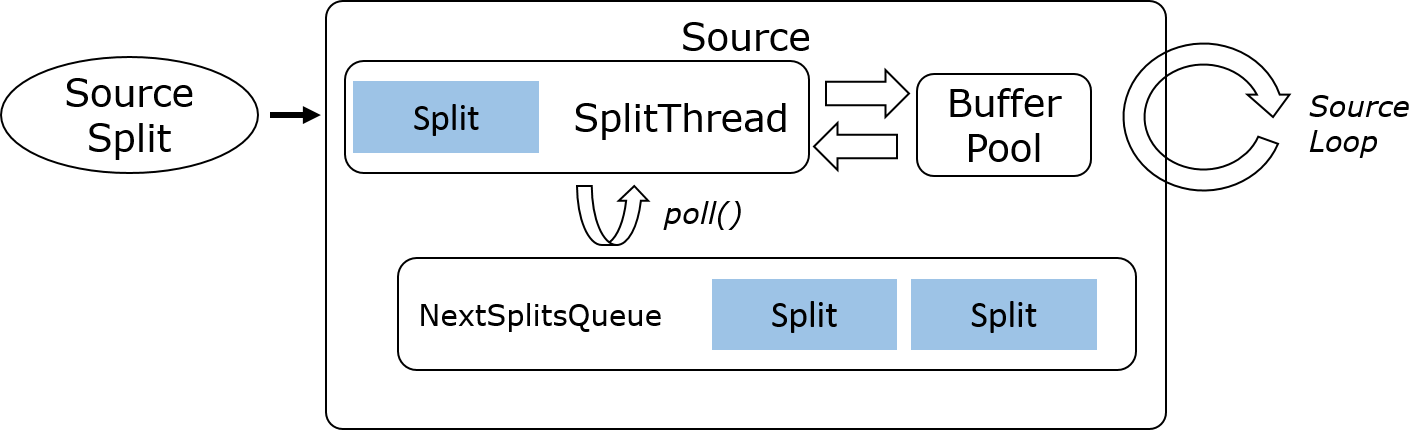

Reader Interface and Threading Model

The reader needs to fulfill the following properties:

- No closed work loop, so it does not need to manage locking

- Non-blocking progress methods, so that it supports running in an actor/mailbox/dispatcher style operator

- All methods are called by the same single thread, so implementors need not deal with concurrency

- Watermark / Event time handling abstracted to be extensible for split-awareness and alignment (see below sections "Per Split Event-time" and "Event-time Alignment")

- All readers should naturally support state and checkpoints

- Watermark generation should be circumvented for batch execution

The following core aspects give us these properties:

- Splits are both the type of work assignment and the type of state held by the source. Assigning a split or restoring a split from a checkpoint is the same to the reader.

- Advancing the reader is a non-blocking call that returns a future.

- We build higher-level primitives on top of the main interface (see below "High-level Readers")

- We hide event-time / watermarks in the SourceOutput and pass different source contexts for batch (no watermarks) and streaming (with watermarks).

The SourceOutput also abstracts the per-partition watermark tracking.
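To illustrate what "abstracting per-partition watermark tracking" could mean, the following sketch combines split-local watermarks into one output watermark by taking the minimum over all non-idle splits and only ever moving forward. This is an assumption for illustration only (the FLIP leaves per-split event time as TBD); `PerSplitWatermarkTracker` and the string split ids are hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.OptionalLong;
import java.util.Set;

/** Hypothetical helper: combines split-local watermarks into one output watermark. */
class PerSplitWatermarkTracker {
    private final Map<String, Long> splitWatermarks = new HashMap<>();
    private final Set<String> idleSplits = new HashSet<>();
    private long lastEmitted = Long.MIN_VALUE;

    /** Records a split's watermark; returns the combined watermark if it advanced. */
    OptionalLong onSplitWatermark(String splitId, long watermark) {
        splitWatermarks.merge(splitId, watermark, Long::max); // a split's watermark never moves back
        idleSplits.remove(splitId);                           // a new watermark ends idleness
        return maybeAdvance();
    }

    /** Idle splits are ignored so that they do not hold back the combined watermark. */
    OptionalLong markSplitIdle(String splitId) {
        idleSplits.add(splitId);
        return maybeAdvance();
    }

    private OptionalLong maybeAdvance() {
        long min = Long.MAX_VALUE;
        boolean anyActive = false;
        for (Map.Entry<String, Long> e : splitWatermarks.entrySet()) {
            if (!idleSplits.contains(e.getKey())) {
                min = Math.min(min, e.getValue());
                anyActive = true;
            }
        }
        if (anyActive && min > lastEmitted) { // keep the output watermark monotonic
            lastEmitted = min;
            return OptionalLong.of(min);
        }
        return OptionalLong.empty();
    }
}
```

Because this logic sits behind the output, a reader only reports per-split watermarks and never computes the minimum itself.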

interface SourceReader<E, SplitT extends SourceSplit> {

    void start() throws IOException;

    CompletableFuture<?> available() throws IOException;

    ReaderStatus emitNext(SourceOutput<E> output) throws IOException;

    void addSplits(List<SplitT> splits) throws IOException;

    List<SplitT> snapshotState();
}

The implementation assumes that there is a single thread that drives the source. This single thread calls emitNext(...) when data is available and cedes execution when nothing is available. It also handles checkpoint triggering, timer callbacks, etc., thus making the task lock-free.

The thread is expected to work on some form of mailbox, in which the data emitting loop is one possible task (next to timers, checkpoints, ...). Below is a very simple pseudo code for the driver loop.

This type of hot loop makes sure we do not use the future unless the reader is temporarily out of data, and we opportunistically bypass the mailbox for performance (the mailbox needs some amount of synchronization).

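import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
// assumes the ReaderStatus constants (MORE_AVAILABLE, ...) are statically imported

class SourceReaderTask<T> {

    final BlockingQueue<Runnable> mailbox = new LinkedBlockingQueue<>();
    final SourceReader<T, ?> reader = ...;
    final SourceOutput<T> output = ...;
    volatile boolean taskAlive = true;

    final Runnable readerLoop = new Runnable() {
        public void run() {
            try {
                while (true) {
                    ReaderStatus status = reader.emitNext(output);
                    if (status == MORE_AVAILABLE) {
                        if (mailbox.isEmpty()) {
                            // hot path: no other mail, keep emitting without touching the mailbox
                            continue;
                        }
                        else {
                            // yield to other mail (checkpoints, timers) and resume afterwards
                            addReaderLoopToMailbox();
                            break;
                        }
                    }
                    else if (status == NOTHING_AVAILABLE) {
                        // re-enqueue the loop once the reader signals that data is available
                        reader.available().thenAccept((ignored) -> addReaderLoopToMailbox());
                        break;
                    }
                    else if (status == END_OF_SPLIT_DATA) {
                        break;
                    }
                }
            }
            catch (IOException e) {
                // a real task would fail here; Runnable.run() cannot throw checked exceptions
                throw new UncheckedIOException(e);
            }
        }
    };

    void addReaderLoopToMailbox() {
        mailbox.add(readerLoop);
    }

    void triggerCheckpoint(CheckpointOptions options) {
        mailbox.add(new CheckpointAction(options));
    }

    void taskMainLoop() throws InterruptedException {
        while (taskAlive) {
            mailbox.take().run();
        }
    }
}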
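To see the control flow of the driver loop end to end, here is a self-contained, single-threaded simulation. It is a sketch under simplifying assumptions: `ToyReader` and `ToyMailboxTask` are hypothetical, the "I/O side" is simulated by calling `push(...)` from the same thread, records and checkpoint markers are collected into one list to show their ordering, and the mailbox is drained instead of blocking on `take()`.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.concurrent.CompletableFuture;

/** The three statuses used by the driver loop above. */
enum ReaderStatus { MORE_AVAILABLE, NOTHING_AVAILABLE, END_OF_SPLIT_DATA }

/** Hypothetical toy reader: emits one buffered record per call. */
class ToyReader {
    private final Deque<String> buffer = new ArrayDeque<>();
    private CompletableFuture<Void> availability = new CompletableFuture<>();
    private boolean finished;

    /** Simulates the I/O side delivering data; in a real source this happens on another thread. */
    void push(List<String> records, boolean last) {
        buffer.addAll(records);
        finished = last;
        availability.complete(null);
    }

    CompletableFuture<Void> available() {
        return availability;
    }

    ReaderStatus emitNext(List<String> output) {
        String next = buffer.poll();
        if (next != null) {
            output.add(next);
        }
        if (!buffer.isEmpty()) {
            return ReaderStatus.MORE_AVAILABLE;
        }
        if (finished) {
            return ReaderStatus.END_OF_SPLIT_DATA;
        }
        if (availability.isDone()) {
            availability = new CompletableFuture<>(); // arm a fresh future for the next wait
        }
        return ReaderStatus.NOTHING_AVAILABLE;
    }
}

/** Single-threaded mailbox task mirroring the pseudo code above. */
class ToyMailboxTask {
    final Deque<Runnable> mailbox = new ArrayDeque<>();
    final List<String> output = new ArrayList<>(); // records and checkpoint markers, in order
    final ToyReader reader;
    final Runnable readerLoop = this::runReaderLoop;
    boolean endOfData;

    ToyMailboxTask(ToyReader reader) {
        this.reader = reader;
    }

    void runReaderLoop() {
        while (true) {
            ReaderStatus status = reader.emitNext(output);
            if (status == ReaderStatus.MORE_AVAILABLE) {
                if (mailbox.isEmpty()) {
                    continue;            // hot path: no other mail, keep emitting
                }
                mailbox.add(readerLoop); // yield so queued mail (e.g. a checkpoint) runs first
                return;
            } else if (status == ReaderStatus.NOTHING_AVAILABLE) {
                reader.available().thenRun(() -> mailbox.add(readerLoop));
                return;
            } else {
                endOfData = true;
                return;
            }
        }
    }

    void triggerCheckpoint(long checkpointId) {
        mailbox.add(() -> output.add("checkpoint-" + checkpointId));
    }

    /** Runs mail until none is left; a real task would block on an empty mailbox instead. */
    void drainMailbox() {
        Runnable mail;
        while ((mail = mailbox.poll()) != null) {
            mail.run();
        }
    }
}
```

Starting the loop, pushing two records, and then triggering a checkpoint yields the order `a, checkpoint-1, b`: the loop notices pending mail after emitting `a`, re-enqueues itself behind the checkpoint, and resumes afterwards, all without a lock.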

High-level Readers

The core source interface (the lowest level interface) is very generic. That makes it flexible, but hard to implement for contributors, especially for sufficiently complex reader patterns like in Kafka or Kinesis.

In general, most I/O libraries used for connectors are not asynchronous, and would need to spawn an I/O thread to make them non-blocking for the main thread.

We propose to solve this by building higher level source abstractions that offer simpler interfaces that allow for blocking calls.

These higher level abstractions would also solve the issue of sources that handle multiple splits concurrently, and the per-split event time logic.

Most readers fall into one of the following categories:

- Sequential Single Split (File, database query, most bounded splits)

- Multi-split multiplexed (Kafka, Pulsar, Pravega, ...)

- Multi-split multi-threaded (Kinesis, ...)

[Figure: Sequential Single Split | Multi-split Multiplexed | Multi-split Multi-threaded]

Most of the readers implemented against these higher level building blocks would only need to implement an interface similar to this. The contract would also be that all methods except wakeup() would be called by the same thread, obviating the need for any concurrency handling in the connector.

interface SplitReader<RecordsT> extends Closeable {

    @Nullable
    RecordsT fetchNextRecords(Duration timeout) throws IOException;

    void wakeup();
}
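One possible way for a high-level building block to bridge this blocking interface to the non-blocking SourceReader contract is a dedicated fetcher thread that calls `fetchNextRecords` in a loop and hands each non-null batch to a bounded queue, while the main thread polls the queue and waits on a future when it is empty. This is a sketch under stated assumptions, not the proposed implementation: `FetcherSketch`, the queue capacity of 2, and the 100 ms fetch timeout are hypothetical choices, and error propagation is omitted.

```java
import java.io.Closeable;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.time.Duration;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CompletableFuture;

/** Local copy of the SplitReader interface proposed above (null result on timeout). */
interface SplitReader<RecordsT> extends Closeable {
    RecordsT fetchNextRecords(Duration timeout) throws IOException;
    void wakeup();
}

/** Hypothetical adapter: one blocking I/O thread feeding a non-blocking main thread. */
class FetcherSketch<RecordsT> {
    private final BlockingQueue<RecordsT> handover = new ArrayBlockingQueue<>(2); // bound = back-pressure
    private final SplitReader<RecordsT> splitReader;
    private final Thread fetcher;
    private volatile CompletableFuture<Void> available = new CompletableFuture<>();
    private volatile boolean running = true;

    FetcherSketch(SplitReader<RecordsT> splitReader) {
        this.splitReader = splitReader;
        this.fetcher = new Thread(this::fetchLoop, "split-fetcher");
        this.fetcher.setDaemon(true);
        this.fetcher.start();
    }

    /** Runs on the fetcher thread; blocking here never stalls the task's main thread. */
    private void fetchLoop() {
        try {
            while (running) {
                RecordsT batch = splitReader.fetchNextRecords(Duration.ofMillis(100));
                if (batch != null) {
                    handover.put(batch);      // blocks while the main thread is behind
                    available.complete(null); // wake up the main thread
                }
            }
        } catch (IOException | InterruptedException e) {
            // Sketch only: a real implementation would surface the error to the main thread.
        }
    }

    /** Main thread: a future that completes once poll() may return data. */
    CompletableFuture<Void> available() {
        return available;
    }

    /** Main thread: returns the next batch, or null without blocking if none is ready. */
    RecordsT poll() {
        RecordsT batch = handover.poll();
        if (batch == null && available.isDone()) {
            available = new CompletableFuture<>();
            if (!handover.isEmpty()) {
                available.complete(null); // a batch arrived between poll() and the reset
            }
        }
        return batch;
    }

    void close() {
        running = false;
        splitReader.wakeup(); // the only method the contract allows from another thread
        fetcher.interrupt();  // unblocks put() if the handover queue is full
        try {
            fetcher.join();
            splitReader.close();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The re-check after resetting the future closes the race in which the fetcher enqueues a batch just after the main thread found the queue empty.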

Per Split Event Time

TBD.

Event Time Alignment

TBD.

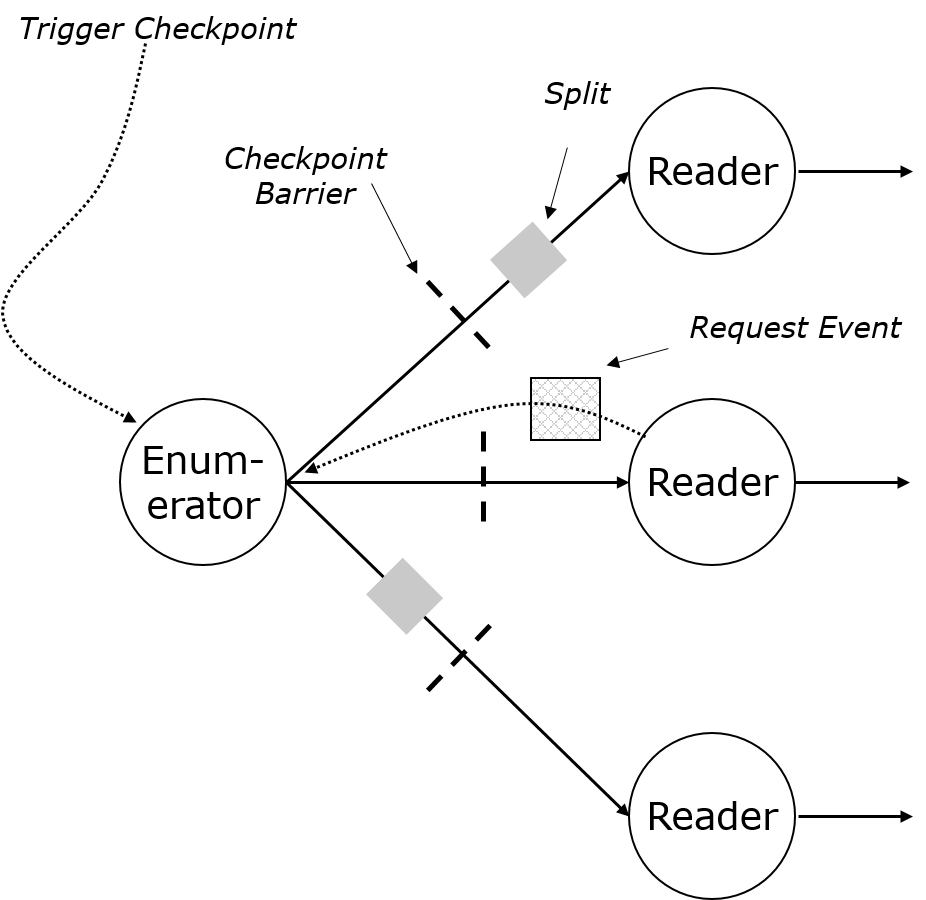

Where to Run the Enumerator

The communication of splits between the Enumerator and the SourceReader has specific requirements:

- Lazy / pull-based assignment: Only when a reader requests the next split should the enumerator send a split. That results in better load-balancing.

- Payload on the "pull" message, to communicate information like "location" from the SourceReader to SplitEnumerator, thus supporting features like locality-aware split assignment.

- Exactly-once fault tolerant with checkpointing: A split is sent to the reader once. A split is either still part of the enumerator (and its checkpoint) or part of the reader or already complete.

- Exactly-once between checkpoints (and without checkpointing): Between checkpoints (and in the absence of checkpoints), the splits that were assigned to readers must be re-added to the enumerator upon failure / recovery.

- The communication channel must not connect tasks into a single failover region.
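The "exactly-once between checkpoints" requirement implies that some component has to remember which splits went to which reader since the last completed checkpoint, so they can be handed back (for example through the enumerator's `addSplitsBack`) when a reader fails. A minimal sketch of that bookkeeping, assuming readers are identified by an int subtask index and that a completed checkpoint makes all assignments made before its snapshot part of the readers' state; `SplitAssignmentTracker` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical bookkeeping of split assignments that are not yet covered by a completed checkpoint. */
class SplitAssignmentTracker<SplitT> {
    // reader index -> splits assigned since the most recent snapshot
    private Map<Integer, List<SplitT>> sinceLastSnapshot = new HashMap<>();
    // checkpoint id -> assignments included in that (possibly not yet completed) checkpoint
    private final TreeMap<Long, Map<Integer, List<SplitT>>> byCheckpoint = new TreeMap<>();

    void recordAssignment(int reader, SplitT split) {
        sinceLastSnapshot.computeIfAbsent(reader, r -> new ArrayList<>()).add(split);
    }

    /** Called when checkpoint {@code checkpointId} is taken. */
    void onSnapshot(long checkpointId) {
        byCheckpoint.put(checkpointId, sinceLastSnapshot);
        sinceLastSnapshot = new HashMap<>();
    }

    /** Once a checkpoint completes, the readers' own state covers everything assigned before it. */
    void onCheckpointComplete(long checkpointId) {
        byCheckpoint.headMap(checkpointId, true).clear();
    }

    /** Returns the splits that must be re-added to the enumerator after the reader fails. */
    List<SplitT> onReaderFailure(int reader) {
        List<SplitT> toReAdd = new ArrayList<>();
        for (Map<Integer, List<SplitT>> perReader : byCheckpoint.values()) {
            List<SplitT> splits = perReader.remove(reader);
            if (splits != null) {
                toReAdd.addAll(splits);
            }
        }
        List<SplitT> current = sinceLastSnapshot.remove(reader);
        if (current != null) {
            toReAdd.addAll(current);
        }
        return toReAdd;
    }
}
```

Splits included in a checkpoint that was taken but never completed are still returned, because the recovered reader restores from the last completed checkpoint, which does not contain them.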

Given these requirements, there would be two options to implement this communication.

Option 1: Enumerator on the TaskManager

The SplitEnumerator runs as a task with parallelism one. Downstream of the enumerator are the SourceReader tasks, which run in parallel. Communication goes through the regular data streams.

The readers request splits by sending "backwards events", similar to "request partition" or the "superstep synchronization" in the batch iterations. These are not exposed in operators, but tasks have access to them.

The task reacts to the backwards events: only upon an event will it send a split. That gives us lazy/pull-based assignment. Payloads on the request backwards event messages (for example, for locality awareness) are possible.

Checkpoints and splits are naturally aligned, because splits go through the data channels. The enumerator is effectively the only entry task from the source, and the only one that receives the "trigger checkpoint" RPC call.

The network connection between enumerator and split reader is treated by the scheduler as a boundary of a failover region.

To decouple the enumerator and reader restart, we need one of the following mechanisms:

- Pipelined persistent channels: The contents of a channel are persistent between checkpoints. A receiving task requests the data "after checkpoint X". The data is pruned when checkpoint X+1 is completed.

  When a reader fails, the recovered reader task can reconnect to the stream after the checkpoint and will get the previously assigned splits. Batch is a special case: if there are no checkpoints, the channel holds all data since the beginning.

  - Pro: The "pipelined persistent channel" also has applications beyond the enumerator-to-reader connection.

  - Con: Splits always go to the same reader and cannot be distributed across multiple readers upon recovery. Especially for batch programs, this may create bad stragglers during recovery.

- Reconnects and task notifications on failures: The enumerator task needs to remember the splits assigned to each result partition until the next checkpoint completes. The enumerator task would have to be notified of the failure of a downstream task and add the splits back to the enumerator. Recovered reader tasks would simply reconnect and get a new stream.

  - Pro: Re-distribution of splits across all readers upon failure/recovery (no stragglers).

  - Con: Breaks the abstraction that separates the task and the network stack.

Option 2: Enumerator on the JobManager

Similar to the current batch (DataSet) input split assigner, the SplitEnumerator code runs in the JobManager, as part of an ExecutionJobVertex. To support periodic split discovery, the enumerator has to be called periodically from an additional thread.

The readers request splits via an RPC message and the enumerator responds via RPC. RPC messages carry payload for information like location.

Extra care needs to be taken to align the split assignment messages with checkpoint barriers. If we start to support metadata-based watermarks (to handle event time consistently when dealing with collections of bounded splits), we need to support that as well through RPC and align it with the input split assignment.

The enumerator creates its own piece of checkpoint state when a checkpoint is triggered.

Critical parts here are the added complexity on the master (ExecutionGraph) and the checkpoints. Aligning them properly with RPC messages is possible when going through the now single-threaded ExecutionGraph dispatcher thread, but supporting asynchronous checkpoint writing requires more complexity.

Open Questions

In both cases, the enumerator is a point of failure that requires a restart of the entire dataflow.

To circumvent that, we probably need an additional mechanism, like a write-ahead log for split assignment.

Comparison between Options

| Criterion | Enumerate on Task | Enumerate on JobManager |
|---|---|---|
| Encapsulation of Enumerator | Encapsulation in separate Task | Additional complexity in ExecutionGraph |
| Network Stack Changes | Significant changes. Some are more clear, like reconnecting. Some seem to break abstractions, like notifying tasks of downstream failures. | No changes necessary |
| Scheduler / Failover Region | Minor changes | No changes necessary |
| Checkpoint alignment | No changes necessary (splits are data messages, naturally align with barriers) | Careful coordination between split assignment and checkpoint triggering. Might be simple if both actions are run in the single-threaded ExecutionGraph thread. |
| Watermarks | No changes necessary (splits are data messages, watermarks naturally flow) | Watermarks would go through ExecutionGraph |
| Checkpoint State | No additional mechanism (only regular task state) | Need to add support for asynchronous non-metadata state on the JobManager / ExecutionGraph |
| Supporting graceful Enumerator recovery (avoid full restarts) | Network reconnects (like above), plus write-ahead of split | Tracking split assignment between checkpoints, plus |

Personal opinion from Stephan: If we find an elegant way to abstract the network stack changes, I would lean towards running the Enumerator in a Task, not on the JobManager.

Core Public Interfaces

Source

public interface Source<T, SplitT extends SourceSplit, EnumChkT> extends Serializable {

    /**
     * Checks whether the source supports the given boundedness.
     *
     * <p>Some sources might only support either continuous unbounded streams, or
     * bounded streams.
     */
    boolean supportsBoundedness(Boundedness boundedness);

    /**
     * Creates a new reader to read data from the splits it gets assigned.
     * The reader starts fresh and does not have any state to resume.
     */
    SourceReader<T, SplitT> createReader(SourceContext ctx) throws IOException;

    /**
     * Creates a new SplitEnumerator for this source, starting a new input.
     */
    SplitEnumerator<SplitT, EnumChkT> createEnumerator(Boundedness mode) throws IOException;

    /**
     * Restores an enumerator from a checkpoint.
     */
    SplitEnumerator<SplitT, EnumChkT> restoreEnumerator(Boundedness mode, EnumChkT checkpoint) throws IOException;

    // ------------------------------------------------------------------------
    //  serializers for the metadata
    // ------------------------------------------------------------------------

    /**
     * Creates a serializer for the input splits. Splits are serialized when sending them
     * from enumerator to reader, and when checkpointing the reader's current state.
     */
    SimpleVersionedSerializer<SplitT> getSplitSerializer();

    /**
     * Creates the serializer for the {@link SplitEnumerator} checkpoint.
     * The serializer is used for the result of the {@link SplitEnumerator#snapshotState()}
     * method.
     */
    SimpleVersionedSerializer<EnumChkT> getEnumeratorCheckpointSerializer();
}

/**
 * The boundedness of the source: "bounded" for the currently available data (batch style),
 * "continuous unbounded" for a continuous streaming style source.
 */
public enum Boundedness {

    /**
     * A bounded source processes the data that is currently available and will end after that.
     *
     * <p>When a source produces a bounded stream, the runtime may activate additional optimizations
     * that are suitable only for bounded input. Incorrectly producing unbounded data when the source
     * is set to produce a bounded stream will often result in programs that do not output any results
     * and may eventually fail due to runtime errors (out of memory or storage).
     */
    BOUNDED,

    /**
     * A continuous unbounded source continuously processes all data as it comes.
     *
     * <p>The source may run forever (until the program is terminated) or might actually end at some point,
     * based on some source-specific conditions. Because that is not transparent to the runtime,
     * the runtime will use an execution mode for continuous unbounded streams whenever this mode
     * is chosen.
     */
    CONTINUOUS_UNBOUNDED
}

Reader

(see above)

Split Enumerator

public interface SplitEnumerator<SplitT, CheckpointT> extends Closeable {

    /**
     * Returns true when the input is bounded and no more splits are available.
     * A return value of true means that the definite end of input has been reached,
     * which is only possible in bounded sources.
     */
    boolean isEndOfInput();

    /**
     * Returns the next split, if it is available. If nothing is currently available,
     * this returns an empty Optional.
     * More may be available later, if {@link #isEndOfInput()} returns false.
     */
    Optional<SplitT> nextSplit(ReaderLocation reader);

    /**
     * Adds splits back to the enumerator. This happens when a reader failed and restarted,
     * and the splits assigned to that reader since the last checkpoint need to be made
     * available again.
     */
    void addSplitsBack(List<SplitT> splits);

    /**
     * Checkpoints the state of this split enumerator.
     */
    CheckpointT snapshotState();

    /**
     * Called to close the enumerator, in case it holds on to any resources, like threads or
     * network connections.
     */
    @Override
    void close() throws IOException;
}
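A minimal bounded implementation of this contract over a fixed list of splits might look as follows. To stay self-contained, the sketch re-declares a trimmed copy of the interface; `ReaderLocation` is reduced to a hypothetical host-name holder, `FileRangeSplit` and `StaticListEnumerator` are hypothetical, the checkpoint type is simply the list of not-yet-assigned splits, and the preference for splits on the requesting host is an illustrative assumption of how the location payload could be used.

```java
import java.io.Closeable;
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.Optional;

/** Hypothetical stand-in for the location payload sent with each split request. */
final class ReaderLocation {
    final String host;
    ReaderLocation(String host) { this.host = host; }
}

/** Trimmed copy of the SplitEnumerator interface proposed above. */
interface SplitEnumerator<SplitT, CheckpointT> extends Closeable {
    boolean isEndOfInput();
    Optional<SplitT> nextSplit(ReaderLocation reader);
    void addSplitsBack(List<SplitT> splits);
    CheckpointT snapshotState();
}

/** Hypothetical split: a file (range) plus the host that stores it. */
final class FileRangeSplit {
    final String path;
    final String host;
    FileRangeSplit(String path, String host) { this.path = path; this.host = host; }
}

/** Bounded enumerator: the checkpoint is just the list of splits not yet handed out. */
class StaticListEnumerator implements SplitEnumerator<FileRangeSplit, List<FileRangeSplit>> {
    private final List<FileRangeSplit> remaining;

    StaticListEnumerator(List<FileRangeSplit> splits) {
        this.remaining = new ArrayList<>(splits);
    }

    @Override public boolean isEndOfInput() {
        return remaining.isEmpty();
    }

    @Override public Optional<FileRangeSplit> nextSplit(ReaderLocation reader) {
        // Prefer a split stored on the requesting host, else fall back to any remaining split.
        for (Iterator<FileRangeSplit> it = remaining.iterator(); it.hasNext(); ) {
            FileRangeSplit split = it.next();
            if (split.host.equals(reader.host)) {
                it.remove();
                return Optional.of(split);
            }
        }
        return remaining.isEmpty() ? Optional.empty() : Optional.of(remaining.remove(0));
    }

    @Override public void addSplitsBack(List<FileRangeSplit> splits) {
        remaining.addAll(splits);
    }

    @Override public List<FileRangeSplit> snapshotState() {
        return new ArrayList<>(remaining); // copy, so later assignments do not alter the snapshot
    }

    @Override public void close() {}
}
```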

public interface PeriodicSplitEnumerator<SplitT, CheckpointT> extends SplitEnumerator<SplitT, CheckpointT> {

    /**
     * Called periodically to discover further splits.
     *
     * @return Returns true if further splits were discovered, false if not.
     */
    boolean discoverMoreSplits() throws IOException;

    /**
     * Continuous enumeration is only applicable to unbounded sources.
     */
    default boolean isEndOfInput() {
        return false;
    }
}

StreamExecutionEnvironment

public class StreamExecutionEnvironment {
    ...
    public <T> DataStream<T> continuousSource(Source<T, ?, ?> source) {...}

    public <T> DataStream<T> continuousSource(Source<T, ?, ?> source, TypeInformation<T> type) {...}

    public <T> DataStream<T> boundedSource(Source<T, ?, ?> source) {...}

    public <T> DataStream<T> boundedSource(Source<T, ?, ?> source, TypeInformation<T> type) {...}
    ...
}

SourceOutput and Watermarking

public interface SourceOutput<E> extends WatermarkOutput {

    void emitRecord(E record);

    void emitRecord(E record, long timestamp);
}

/**
 * An output for watermarks. The output accepts watermarks and idleness (inactivity) status.
 */
public interface WatermarkOutput {

    /**
     * Emits the given watermark.
     *
     * <p>Emitting a watermark also ends previously marked idleness.
     */
    void emitWatermark(Watermark watermark);

    /**
     * Marks this output as idle, meaning that downstream operations do not
     * wait for watermarks from this output.
     *
     * <p>An output becomes active again as soon as the next watermark is emitted.
     */
    void markIdle();
}
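The reader section states that batch and streaming receive different outputs: batch ignores watermarks, streaming forwards them. The following sketch shows that the same reader code can run unchanged against both. It re-declares the two interfaces locally; `Watermark` is reduced to a hypothetical timestamp holder, `StreamingOutput`, `BatchOutput` and `ReaderLogic` are hypothetical, and the outputs collect into lists instead of forwarding to an operator.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical minimal watermark type for this sketch. */
final class Watermark {
    final long timestamp;
    Watermark(long timestamp) { this.timestamp = timestamp; }
}

interface WatermarkOutput {
    void emitWatermark(Watermark watermark);
    void markIdle();
}

interface SourceOutput<E> extends WatermarkOutput {
    void emitRecord(E record);
    void emitRecord(E record, long timestamp);
}

/** Streaming flavor: forwards watermarks and tracks idleness as the Javadoc describes. */
class StreamingOutput<E> implements SourceOutput<E> {
    final List<E> records = new ArrayList<>();
    final List<Long> watermarks = new ArrayList<>();
    boolean idle;

    public void emitRecord(E record) { records.add(record); }
    public void emitRecord(E record, long timestamp) { records.add(record); } // a real output would attach the timestamp
    public void emitWatermark(Watermark w) { idle = false; watermarks.add(w.timestamp); } // ends idleness
    public void markIdle() { idle = true; }
}

/** Batch flavor: same calls, but watermarks and idleness are simply ignored. */
class BatchOutput<E> implements SourceOutput<E> {
    final List<E> records = new ArrayList<>();

    public void emitRecord(E record) { records.add(record); }
    public void emitRecord(E record, long timestamp) { records.add(record); }
    public void emitWatermark(Watermark w) { }
    public void markIdle() { }
}

class ReaderLogic {
    /** Identical emission code for both modes; naive watermark = timestamp of the last record. */
    static void emitAll(List<String> lines, SourceOutput<String> out) {
        long ts = 0;
        for (String line : lines) {
            out.emitRecord(line, ts);
            out.emitWatermark(new Watermark(ts));
            ts += 10;
        }
        out.markIdle();
    }
}
```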

Implementation Plan

The implementation should proceed in the following steps, some of which can proceed concurrently.

- Validate the interface proposal by implementing popular connectors of different patterns:

  - FileSource

    - For a row-wise format (splittable within files, checkpoint offset within a split)

    - For a bulk format like Parquet / Orc

    - Bounded and unbounded split enumerator

  - KafkaSource

    - Unbounded without dynamic partition discovery

    - Unbounded with dynamic partition discovery

    - Bounded

  - Kinesis

    - Unbounded

- Implement test harnesses for the high-level reader patterns

  - Test the functionality of the readers implemented in the first step

- Implement a new SourceReaderTask and implement the single-threaded mailbox logic

- Implement SourceEnumeratorTask

- Implement the changes to network channels and scheduler, or to RPC service and checkpointing, to handle split assignment and checkpoints and re-adding splits.

Compatibility, Deprecation, and Migration Plan

In the DataStream API, we mark the existing source interface as deprecated but keep it for a few releases.

The new source interface is supported by different stream operators, so the two source interfaces can easily co-exist for a while.

We do not touch the DataSet API, which will eventually be subsumed by the DataStream API anyway.

Test Plan

TBD.

Previous Versions

Public Interfaces

We propose a new Source interface along with two companion interfaces SplitEnumerator and SplitReader:

public interface Source<T, SplitT, EnumeratorCheckpointT> extends Serializable {

    TypeSerializer<SplitT> getSplitSerializer();

    TypeSerializer<T> getElementSerializer();

    TypeSerializer<EnumeratorCheckpointT> getEnumeratorCheckpointSerializer();

    EnumeratorCheckpointT createInitialEnumeratorCheckpoint();

    SplitEnumerator<SplitT, EnumeratorCheckpointT> createSplitEnumerator(EnumeratorCheckpointT checkpoint);

    SplitReader<T, SplitT> createSplitReader(SplitT split);
}

public interface SplitEnumerator<SplitT, CheckpointT> {

    Iterable<SplitT> discoverNewSplits();

    CheckpointT checkpoint();
}

public interface SplitReader<T, SplitT> {

    /**
     * Initializes the reader and advances to the first record. Returns true
     * if a record was read. If no record was read, records might still be
     * available for reading in the future.
     */
    boolean start() throws IOException;

    /**
     * Advances to the next record. Returns true if a record was read. If no
     * record was read, records might still be available for reading in the future.
     *
     * <p>This method must return as fast as possible and not block if no records
     * are available.
     */
    boolean advance() throws IOException;

    /**
     * Returns the current record.
     */
    T getCurrent() throws NoSuchElementException;

    long getCurrentTimestamp() throws NoSuchElementException;

    long getWatermark();

    /**
     * Returns a checkpoint that represents the current reader state. The current
     * record is not the responsibility of the reader, it is assumed that the
     * component that uses the reader is responsible for that.
     */
    SplitT checkpoint();

    /**
     * Returns true if reading of this split is done, i.e. there will never be
     * any available records in the future.
     */
    boolean isDone() throws IOException;

    /**
     * Shuts down the reader.
     */
    void close() throws IOException;
}
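With this earlier SplitReader interface, the calling component drives the start/advance/getCurrent cycle and uses isDone() to tell "nothing right now" from "finished". A minimal sketch under stated assumptions: it re-declares the interface locally, `ListSplitReader` (an in-memory reader whose split is simply the index of the next record, with the index doubling as a toy timestamp) and `SplitReaderDriver` are hypothetical, and the driver busy-loops where a real one would yield.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.NoSuchElementException;

interface SplitReader<T, SplitT> {
    boolean start() throws IOException;
    boolean advance() throws IOException;
    T getCurrent() throws NoSuchElementException;
    long getCurrentTimestamp() throws NoSuchElementException;
    long getWatermark();
    SplitT checkpoint();
    boolean isDone() throws IOException;
    void close() throws IOException;
}

/** Hypothetical reader over an in-memory list; the "split" is the index of the next record. */
class ListSplitReader implements SplitReader<String, Integer> {
    private final List<String> data;
    private int next;       // index of the next record to read
    private String current; // null when there is no current record

    ListSplitReader(List<String> data, int startIndex) {
        this.data = data;
        this.next = startIndex;
    }

    public boolean start() { return advance(); }

    public boolean advance() {
        if (next >= data.size()) {
            current = null;
            return false;
        }
        current = data.get(next++);
        return true;
    }

    public String getCurrent() {
        if (current == null) throw new NoSuchElementException();
        return current;
    }

    public long getCurrentTimestamp() { getCurrent(); return next - 1; } // toy: index as timestamp
    public long getWatermark() { return next - 1; }
    public Integer checkpoint() { return next; } // excludes the current record, as the Javadoc says
    public boolean isDone() { return next >= data.size(); }
    public void close() {}
}

class SplitReaderDriver {
    /** Emits every record; a real driver would yield instead of spinning when advance() is false. */
    static List<String> readAll(SplitReader<String, ?> reader) {
        try {
            List<String> out = new ArrayList<>();
            boolean hasRecord = reader.start();
            while (true) {
                if (hasRecord) {
                    out.add(reader.getCurrent()); // emit before checking isDone()
                } else if (reader.isDone()) {
                    break;
                }
                hasRecord = reader.advance();
            }
            reader.close();
            return out;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Restoring a reader from a checkpoint then amounts to constructing it with the checkpointed index.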

The Source interface itself is really only a factory for creating split enumerators and split readers. A split enumerator is responsible for detecting new partitions/shards/splits, while a split reader is responsible for reading from one split. This separates the concerns and allows putting the enumeration in a parallelism-one operation or outside the execution graph. It also gives Flink more possibilities to decide how processing of splits should be scheduled.

This also potentially allows mixing and matching different enumeration strategies with split readers. For example, the current Kafka connector has different strategies for partition discovery that are intermingled with the rest of the code. With the new interfaces in place, we would only need one split reader implementation and there could be several split enumerators for the different partition discovery strategies.

A naive implementation prototype that implements this in user space atop the existing Flink operations is given here: https://github.com/aljoscha/flink/commits/refactor-source-interface. This also comes with a complete Kafka source implementation that already supports checkpointing.

Proposed Changes

As an MVP, we propose to add the new interfaces and a runtime implementation that uses the existing SourceFunction for running the enumerator, along with a special operator implementation for running the split reader. As a next step, we can add a dedicated StreamTask implementation for both the enumerator and reader to take advantage of the additional optimization potential, for example more efficient handling of the checkpoint lock.

The next steps would be to implement event-time alignment.

Compatibility, Deprecation, and Migration Plan

- The new interface and new source implementations will be provided side-by-side to the existing sources, thus not breaking existing programs.

- We can think about allowing migrating existing jobs/savepoints smoothly to the new interface but it is a secondary concern.